| Aspect | Internet | Intranet |

|---|---|---|

| Connectivity | Publicly accessible, global connectivity | Private network, limited to a specific organization |

| Accessibility | Open to the public | Restricted access within the organization |

| Security | Higher security concerns due to public access | Generally more secure, as it’s within a controlled environment |

| Purpose | Facilitates global communication and access | Supports internal communication and collaboration |

| Scope | Extensive, covers the entire globe | Limited to the organization’s premises |

| Speed | Speed can vary based on global network conditions | Typically faster due to localized infrastructure |

| Examples | World Wide Web (WWW), public websites | Internal portals, shared databases, communication platforms |

| Administration | Managed by multiple entities and ISPs | Controlled and administered by the organization |

| Cost | Usage may involve costs for bandwidth and hosting | Costs associated with setup and maintenance, usually less expensive |

| Independence | Independent of any single organization | Tied to the specific organization’s needs |

Nuts-and-Bolts Description

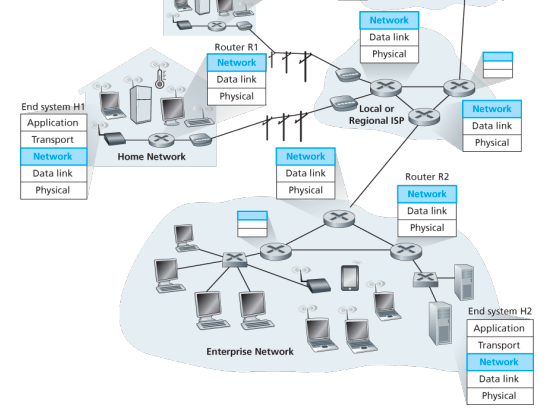

Internet is a network, I know, but of what? The Internet is a computer network that interconnects billions of computing devices throughout the world. Increasingly, nontraditional internet things such as laptops, smartphones, tablets, TVs, gaming consoles, thermostats, home appliances, watches, eye glasses are being connected to the Internet. In Internet jargon, all of these devices are called hosts or end systems

End systems: End systems are connected together by a network of communication links and packet switches. There are many types of communication links which are made up of different types of physical media, including coaxial cable, copper wire, optical fiber, and radio spectrum. Packet switches come in many shapes and flavors, but the two most prominent types in today’s Internet are routers and link-layer switches. Different links can transmit data at different rates, with the transmission rate of a link measured in bits/second.

Communication links: When one end system has data to send to another end system, the sending end system segments the data and adds header bytes to each segment. The resulting packages of information, known as packets in the jargon of computer networks, are then sent through the network to the destination end system, where they are reassembled into the original data.

Tell me more about internals of what’s connecting end systems. End systems access the Internet through Internet Service Providers (ISPs), including residential ISPs such as local cable or telephone companies; corporate ISPs; university ISPs; ISPs that provide WiFi access in airports, hotels, coffee shops, and other public places; and cellular data ISPs, providing mobile access to our smartphones and other devices. Each ISP is in itself a network of packet switches and communication links. ISPs provide a variety of types of network access to the end systems, including residential broadband access such as cable modem or DSL, high-speed local area network access, and mobile wireless access. ISPs also provide Internet access to content providers, connecting Web sites and video servers directly to the Internet.

How are these all end systems and ISPs in coordination? The Internet is all about connecting end systems to each other, so the ISPs that provide access to end systems must also be interconnected. These lower-tier ISPs are interconnected through national and international upper-tier ISPs such as Level 3 Communications, AT&T, Sprint, and NTT. An upper-tier ISP consists of high-speed routers interconnected with high-speed fiber-optic links. Each ISP network, whether upper-tier or lower-tier, is managed independently, runs the IP protocol, and conforms to certain naming and address conventions.

Services Description

In addition to traditional applications such as e-mail and Web surfing, Internet applications include mobile smartphone and tablet applications, including Internet messaging, mapping with real-time road-traffic information, music streaming from the cloud, movie and television streaming, online social networks, video conferencing, multi-person games, and location-based recommendation systems.

The applications are said to be distributed applications, since they involve multiple end systems that exchange data with each other.

End systems attached to the Internet provide a socket interface that specifies how a program running on one end system asks the Internet infrastructure to deliver data to a specific destination program running on another end system. This Internet socket interface is a set of rules that the sending program must follow so that the Internet can deliver the data to the destination program.

The Network Edge

End systems are also referred to as hosts because they host that is, run application programs such as a Web browser program, a Web server program, an e-mail client program, or an e-mail server program. Hosts are sometimes further divided into two categories: clients and servers. Informally, clients tend to be desktop and mobile PCs, smartphones, and so on, whereas servers tend to be more powerful machines that store and distribute Web pages, stream video, relay e-mail, and so on. Today, most of the servers from which we receive search results, e-mail, Web pages, and videos reside in large data centers.

Having considered the applications and end systems at the edge of the network, the network that physically connects an end system to the first router (also known as the “edge router”) on a path from the end system to any other distant end system are the access networks.

The access ISP does not have to be a telco or cable company; instead it can be, for example, a university providing Internet access to student, staff and faculty. But connecting end users and content providers into an access ISP is only a small piece of solving the puzzle of connecting the billions of end systems that make up the Internet. To complete this puzzle, the access ISPs themselves must be interconnected which is done by creating a network of networks.

(The Edge Network) (see: conversion techniques, information theory;)

What is even a protocol? All activity in the Internet that involves two or more communicating remote entities is governed by a protocol. For example, hardware-implemented protocols in two physically connected computers control the flow of bits on the wire between the two network interface cards; congestion-control protocols in end systems control the rate at which packets are transmitted between sender and receiver; protocols in routers determine a packet’s path from source to destination.

A protocol defines the format and the order of messages exchanged between two or more communicating entities, as well as the actions taken on the transmission and/or receipt of a message or other event.

The Network Core

Having examined the Internet’s edge, now delve more deeply inside the network core - the mesh of packet switches (see switching:) and links that interconnects the Internet’s end systems. Much of evolution of Internet is driven by economics and national policy rather than by performance considerations. Let’s incrementally build a series of network structures, with each new structure being a better approximation of the complex internet we have today.

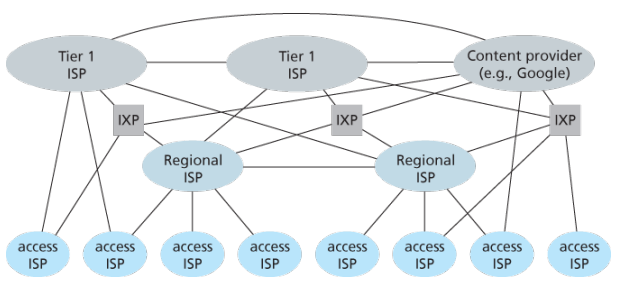

Naive approach (mesh ISPs) One naive approach would be to have each access ISP directly connect with every other access ISP, such a mesh design is, of course, much too costly for the access ISPs, as it would require each access ISP to have a separate communication link to each of the hundreds of thousands of other access ISPs all over the world.

Structure 1 (start ISPs) Our first network structure, interconnects all of the access ISPs with a single global transit ISP, which is a network of routers and communication links that not only spans the globe, but also has at least one router near each of hundreds of thousands of access ISPs. It would be very costly for the global ISP to build such an extensive network. To be profitable, it would naturally charge each of the access ISPs for connectivity, with the pricing reflecting the amount of traffic an access ISP exchanges with the global ISP.

Structure 2 Now If some company builds and operates a global transit ISP that is profitable then it is natural for other companies to build their own global transit ISPs and compete with the original global transit ISP which leads to another structure that consists of hundreds of thousands of access ISPs and multiple global transit ISPs. However, the global transit ISPs themselves must interconnect: otherwise access ISPs connected to one of the global transit providers would not be able to communicate with access ISPs connected to other global transit providers. This is a two-tier hierarchy with global transit providers residing at the top tier and access ISPs at the bottom tier. This assumes that global transit ISPs are not only capable of getting close to each and every access ISP, but also find it economically desirable to do so.

Structure 3 In reality, although some ISPs do have impressive global coverage and do directly connect with many access ISPs, no ISP has presence in each and every city in the world. Instead, in any given region, there may be a regional ISP to which the access ISPs in the region connect. Each regional ISP then connects to tier-1 ISPs. Tier-1 ISPs are similar to our (imaginary) global transit ISP; but tier-1 ISPs, which actually do exist, do not have a presence in every city in the world. There are approximately a dozen tier-1 ISPs, including Level 3 Communications, AT&T, Sprint, and NTT. Each regional ISP then connects to tier-1 ISPs. An access ISP can also connect directly to a tier-1 ISP, in which case it pays the tier-1 ISP. To further complicate matters, in some regions, there may be a larger regional ISP (possibly spanning an entire country) to which the smaller regional ISPs in that region connect; the larger regional ISP then connects to a tier-1 ISP.

Structure 4 The amount that a customer ISP pays a provider ISP reflects the amount of traffic it exchanges with the provider. To reduce these costs, a pair of nearby ISPs at the same level of the hierarchy can peer, that is, they can directly connect their networks together so that all the traffic between them passes over the direct connection rather than through upstream intermediaries. When two ISPs peer, it is typically settlement-free, that is, neither ISP pays the other. As noted earlier, tier-1 ISPs also peer with one another, settlement-free. Along these same lines, a third-party company can create an Internet Exchange Point (IXP), which is a meeting point where multiple ISPs can peer together. An IXP is typically in a stand-alone building with its own switches. There are over 400 IXPs in the Internet today.

Structure 5 We now finally arrive at Network Structure 5, which describes today’s Internet builds on top of Network Structure 4 by adding content-provider networks. Google is currently one of the leading examples of such a content-provider network. The Google data centers are all interconnected via Google’s private TCP/IP network, which spans the entire globe but is nevertheless separate from the public Internet. Importantly, the Google private network only carries traffic to/from Google servers. The Google private network attempts to “bypass” the upper tiers of the Internet by peering (settlement free) with lower-tier ISPs, either by directly connecting with them or by connecting with them at IXPs. However, because many access ISPs can still only be reached by transiting through tier-1 networks, the Google network also connects to tier-1 ISPs, and pays those ISPs for the traffic it exchanges with them. By creating its own network, a content provider not only reduces its payments to upper-tier ISPs, but also has greater control of how its services are ultimately delivered to end users.

Network Standardization (Who is Who?)

The legal status of the world’s telephone companies varies considerably from country to country. At one extreme is the United States, which has over 2000 separate, privately owned telephone companies. At the other extreme are countries in which the national government has a complete monopoly on all communication, including the mail, telegraph, telephone, and often radio and television.

With all these different suppliers of services, there is clearly a need to provide compatibility on a worldwide scale to ensure that people (and computers) in one country can call their counterparts in another one. In 1865, representatives from many European governments met to form the predecessor to today’s ITU. Its job was to standardize international telecommunications, which in those days meant telegraphy. In 1947, ITU became an agency of the United Nations.

International standards are produced by ISO, a voluntary non-treaty organization founded in 1946. Its members are the national standards organizations of the 157 members countries. On issues of telecommunication standards, ISO and ITU-T often cooperate (ISO is a member of ITU-T) o avoid the irony of two official and mutually incompatible international standards.

NIST (National Institute of Standards and Technology) is part of the U.S. Department of Commerce. It used to be called the National Bureau of Standards. It issues standards that are mandatory for purchases made by the U.S. Government, except for those of the Department of Defense, which defines its own standards.

Another major player in the standards world is IEEE (Institute of Electrical and Electronics Engineers), the largest professional organization in the world. In addition to publishing scores of journals and running hundreds of conferences each year, IEEE has a standardization group that develops standards in the area of electrical engineering and computing.

When the ARPANET was set up, DoD created an informal committee to oversee it. In 1983, the committee was renamed the Internet Activities Board (IAB). Each of the approximately ten members of the IAB headed a task force on some issue of importance. The IAB met several times a year to discuss results and to give feedback to the DoD and NSF, which were providing most of the funding at this time. Communication was done by a series of technical reports called RFCs (Request For Comments).

By 1989, the Internet had grown so large that this highly informal style no longer worked. Many vendors by then offered TCP/IP products and did not want to change them just because ten researchers had thought of a better idea. In the summer of 1989, the IAB was reorganized again. The researchers were moved to the IRTF (Internet Research Task Force), which was made subsidiary to IAB, along with the IETF (Internet Engineering Task Force).

For Web standards, the World Wide Web Consortium (W3C) develops protocols and guidelines to facilitate the long-term growth of the Web. It is an industry consortium led by Tim Berners-Lee and set up in 1994 as the Web really begun to take off.

RFC in depth:

A RFC is a publication in a series from the principal technical development and standards-setting bodies for the Internet, most prominently the IETF. An RFC is authored by individuals or groups of engineers and computer scientists in the form of a memorandum describing methods, behaviors, research, or innovations applicable to the working of the Internet and Internet-connected systems. It is submitted either for peer review or to convey new concepts, information, or occasionally, engineering humor.

- The IETF adopts some of the proposal published as RFCs as Internet Standards. However, many RFCs are informational or experimental in nature and are not standards.

- The official source for RFCs on WWW is the RFC Editor.

- The RFC Editor assigns RFC a serial number. Once assigned a number and published, an RFC is never rescinded or modified; if the document requires amendments, the authors publish a revised document. Therefore, some RFCs supersede others; the superseded RFCs are said to be deprecated, obsolete, or obsoleted by the superseding RFC.

The RFC series contains three sub-series for IETF RFCs:

- BCP

- FYI

- STD

There are five streams of RFCs: IETF, IRTF, IAB, independent submission and Editorial.

Each RFC is assigned a designation with regard to status within the Internet standardization process. This status is one of the following:

- Informational,

- Experimental,

- BCP,

- Standards Track,

- Historic

Example:

- IPv4 RFC 791 “Internet Protocol”

- TCP RFC 793 “Transmission Control Protocol”

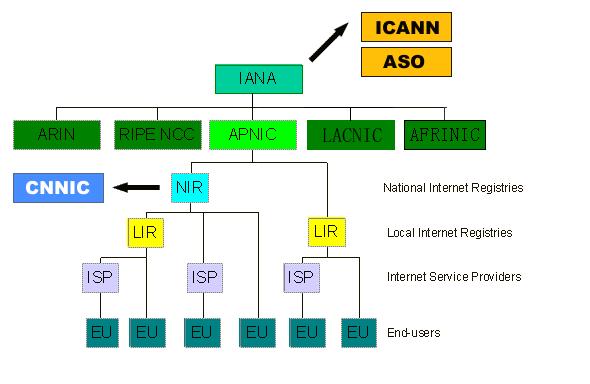

ICANN

The Internet Corporation for Assigned Names and Numbers (ICANN) is an American multistakeholder group and nonprofit organization responsible for coordinating the maintenance and procedures of several databases related to the namespaces and numerical spaces of the Internet, ensuring network’s stable and secure operation.

ICANN performs the actual technical maintenance work of the Central Internet Address pools and DNS root zone registries pursuant to the Internet Assigned Numbers Authority (IANA) function contract.

Much of its work has concerned the Internet’s global Domain Name System (DNS), including policy development for internationalization of the DNS, introduction of new generic top level domains (TLDs), and the operation of root name servers.

DNS Management: ICANN oversees the global DNS, ensuring the stable and secure operation of the system. This involves managing the allocation of domain names and IP addresses, as well as the coordination of root server systems

Accreditation of Registrars: ICANN accredits domain registrars, ensuring they comply with standards and policies. Registrars act as intermediaries between individuals or organizations and the domain registration process.

IANA Functions Oversight: ICANN oversees the Internet Assigned Numbers Authority (IANA) functions, which includes

- management of global IP address space,

- assignment of protocol parameters,

- and maintenance of the DNS root zone.

ICANN plays a crucial role in promoting and enhancing the security and stability of the DNS. This includes initiatives to combat cyber threats, support the implementation of DNSSEC (Domain Name System Security Extensions), and address emerging challenges.

Example: In 2013, the initial report of ICANN’s Expert Working Group has recommended that the present form of Whois, a utility that allows anyone to know who has registered a domain name on the Internet, should be abandoned.

In a long-running dispute, ICANN has so far declined to allow a Turkish company to purchase the .islam and .halal gTLDs.

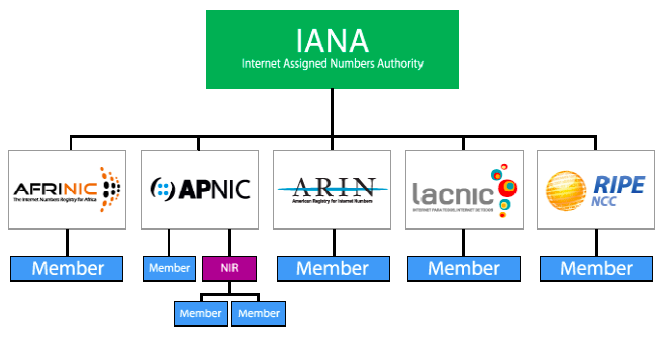

The Registry System:

Internet number resources are distributed globally according to a hierarchical registry system that has evolved over the past two decades. The graph shows how Internet number resources are distributed from the central IANA-managed pool to the five Regional Internet Registries and then onto their Members (Local Internet Registries, or LIRs). The IANA has authority over all number spaces used in the internet, including IP address space and AS numbers. IANA allocates public Internet address space to RIRs according to their established needs.

The duty of an RIR include the coordination and representation of the members in its region. Additional RIRs may be established in the future, although their number will remain relatively low. The RIRs work closely together to develop consistent policies and promote best current practice for the Internet.

-

LIRs are established under the authority of an RIR. LIRs are typically operated by Internet Service Providers and serve the customers of those ISPs. Other organizations such as large Enterprises can also operate LIRs.

-

An entity that uses IP address space for its network only and does not provide IP/ASN services to customers is called an End User. Strictly speaking, End Users are not part of the Internet Registry System. They do, however, play an important role with respect to the goals defined above. In order to achieve the conservation goal, for example, End Users should plan their networks to use a minimum amount of address space. They must document their addressing and deployment plans to the LIR and furnish any additional information required by the LIR for making assignment decisions. To achieve the aggregation goal, an End User should choose an appropriate LIR. End Users should be aware that changing ISPs may require replacing addresses in their networks.

-

In addition to these key players in the Internet Registry System, there are often consultants who set up and manage networks for End Users. The consultants may be the persons submitting a request for address space to an LIR on behalf of an End User. We refer to the person making the request for an End User as a requester, whether that person is employed by the organisation, or is simply acting on behalf of the organisation with respect to the address space request.

-

With regard to Internet number resources, IANA’s role is to allocate IP addresses and AS Numbers from the pools of unallocated resources to RIRs according to their needs, and to document assignments made by the IETF. When an RIR requires more addresses for allocation or assignment within its region, IANA makes an additional allocation to the RIR.

Backbones of Internet

Optical backbone:

Marine cables:

Satellites:

Teleports:

A telecommunications port is a satellite ground station with multiple parabolic antennas that functions as a hub connecting a satellite or geocentric orbital network with a terrestrial telecommunications network.

Domain Name System

Internet hosts are identified by IP addresses which is four bytes and has a rigid hierarchical structure. People rather prefer the more mnemonic host-name identifier, while routers prefer IP, in order to reconcile these preferences, need a directory service that translates hostnames to IP addresses.

The DNS is a collective term for a distributed database implemented in a hierarchy of DNS servers and an application-layer protocol that allows hosts to query the distribute database

The DNS servers are often UNIX machines running the Berkeley Internet Name Domain (BIND) software, which runs over UDP and uses port 53.

DNS is commonly employed by other application-layer protocols -including HTTP and SMTP to translate user-supplied hostnames to IP addresses. As an example, when a browser that is, HTTP client running on some user’s host requests the URL , in order for the user’s host to be able to send an HTTP request message to the Web server, the user’s host must first obtain the IP address of .

DNS provides few other important services in addition to translating hostnames to IP addresses:

-

Host alisaing: A host with a complicated hostname can have one or more alias names, for ex: a hostname such as could have, say, two aliases such as and , in this case is said to be canonical hostname. Alias hostnames, when present, are typically more mnemonic than canonical hostnames. DNS can be invoked by an application to obtain the canonical hostname for a supplied alias hostname as well as the IP address of the host.

-

Mail aliasing: The hostname of Yahoo mail server is more complicated and much less mnenomic than simply . DNS can be invoked by a mail application to obtain the canonical hostname for a supplied alias as well as the IP address of the host.

-

Load distribution: DNS is also used to perform load distribution among replicated servers such as replicated Web servers, for replicated Web servers. Busy sites, such as cnn.com , are replicated over multiple servers, with each server running on a different end system and each having a different IP address. For replicated Web servers, a set of IP addresses is thus associated with one canonical hostname. When clients make a DNS query for a name mapped to a set of addresses, the server responds with the entire set of IP addresses, but rotates the ordering of the addresses with each reply.

How DNS works for hostname-to-IP-address translation service?

When some applicaiton running in a user’s host needs to translate a hostname to an IP address, the application invokes the client side of DNS, specifying the hostname that needs to be translated. On many UNIX-based machines, is the function call that an application calls in order to perform the translation.

DNS in the user’s host then takes over, sending a query message into the network. All DNS query and replies are sent within UDP datagrams to port 53. After a delay ranging from milliseconds to seconds, DNS in the user’s host receives a DNS message that provides the desired mapping. This mapping is then passed to the invoking application.

The hierarchy of DNS servers?

A simple design for DNS would have one DNS server that contains all the mappings. Although the simplicity of this design is attractive, it is inappropriate for today’s Internet with its vast number of hosts.

A centralized database in a single DNS server simply does not scale, consequently DNS is distribued by design.

In order to deal with the issue of scale, the DNS uses a large number of servers, organized in a hierarchial fashion and distributed around the world. No single DNS server has all of the mappings for all of the hosts in the Internet. Instead the mappings are distributed across the DNS servers.

To a first approximation, there are three classes of DNS servers -root DNS servers, top-level doman (TLD) DNS, and authoritative DNS organized in a hierarchy. Suppose a DNS client wants to determine the IP address for the hostname amazon.com. The client first contacts one of the root servers, which return IP addresses for TLD servers for the top-level domain com. The client then contacts one of these TLD servers, which returns the IP address of an authorative server for amazon.com. Finally, the client contacts one of the authorative servers for amazon.com which returns the IP address for the hostname.

-

Root DNS servesr. There are over 400 root name serves scattered all over the world. These root name servers are managed by 13 different organizations. Root name servers provide the IP addresses of the TLD servers.

-

For each top-level domains, such as com, org, net, edu, gov, and all of the country top-level domain there is TLD server (or server cluster). The company Verisign Global Registry Services maintains the TLD servers for the com top-level domain. TLD servers provide IP for authoritative DNS servers.

-

Authoritative DNS servers. Every organization with publicly accessible hosts such as Web servers and mail servers on the Internet must provide publicly accessible DNS records that map the names of those hosts to IP addresses. An organization’s authoritative DNS server houses these DNS records. An organization can choose to implement its own authoritative DNS server to hold these records; alternatively, the organization can pay to have these records stored in an authoritative DNS server of some service provider.

There is an another important type of DNS server called the local DNS server, does not strictly belong to the hierarchy of servers but is nevertheless central to the DNS architecture.

Each ISP - such as a residential ISP or an institutional ISP - has a local DNS server (also called a default name server). When a host connects to an ISP, the ISP provides the host with the IP addresses of one or more of its local DNS servers typically through DHCP.

A host’s local DNS server is typically close to the host, for an institutional ISP, the local DNS server may be on the same LAN as the host; for a residential ISP, it is typically separated from the host by no more than a few routers.

Example: Suppose the host cse.nyu.edu desires the IP address of gaia.cs.umass.edu. NYU’s local DNS server is dns.nyu.edu and that an authoritative DNS server for gaia.cs.umass.edu is called dns.umass.edu.

1. The host cse.nyu.edu first sends a DNS query message to its local DNS server, dns.nyu.edu containing the hostname to be translated namely gaia.cs.umass.edu.

2. The local DNS server forwards the query message to root DNS server which takes note of the edu suffix and returns to the local DNS server a list of IP addresses for TLD servers responsible for edu.

3. The local DNS server then re-sends the query message to one of these TLD servers which takes note of the umass.edu suffix and responds with the IP address of the authoritative DNS server for the University of Massachusetts, namely, dns.umass.edu

4. Finally query is send directly to dns.umass.edu which responds with the IP address of gaia.cs.umass.edu

Eight DNS messages were sent, assuming that the TLD server knows the authoritative DNS server for the hostname, which is not always true, instead the TLD server may know only of an intermediate DNS server, which in turn knows the authoritative DNS server for the hostname.

(yesma bhannu parda intermediate authorative dns server hunxa rey, yei case ma ni dns.cs.umass.edu bhanni chai harek deparment ma bhako servers ko authorative huna payo, testai garera, yesko authorative arko kunai intermediate hunxa)

![[Screenshot from 2023-09-11 23-37-36.png]]

The query from the requesting host to the local DNS server is recursive, and the remaining queries are iterative.

DNS caching -DNS extensively exploits DNS caching in order to improve the delay performance and to reduce the number of DNS messages -In a query chain, when a DNS server receives a DNS reply, it can cache the mapping in its local memory -Because hosts and mappings between hostnames and IP addresses are by no means permanent, DNS servers discard cached information after a period of time often set to two days -A local DNS server can also cache the IP addresses of TLD servers, thereby allowing the local DNS server to bypass the root DNS servers in a query chain -As a result, root servers are bypassed for all but a very small fraction of DNS queries

DNS Records and Messages -The DNS servers that together implement the DNS distributed database store resource records (RRs), so each DNS reply message carries one or more resource records -A resource record is a four-tupel that contains the following fields:

(Name, Value, Type, TTL)

-TTL is the time to live of the resource record; it determines when a resource should be removed from a cache

-The meaning of Name and Value depend on Type:

1. If Type=A, then Name is a hostname and Value is the IP address for the hostname

2. If Type=Ns, then Name is a domain and Value is the hostname of an authorative DNS server that knows how to obtain the IP addresses for hosts in the domain

3. If Type=CNAME, then Value is a canoncial hostname for the alias hostname Name

4. If Type=MX, then Value is the canonical name of a mail server that has an alias hostname Name

-If a DNS server is authorative for a particular hostname, ten the DNS server will contain a Type A record for the hostname, even if it is not, may contain a Type A record in its cache

-If not authorative, then will contain a Type NS record for the domain that includes the hostname; along with it will also contain a Type A record that provides the IP address of the DNS server in the Value filed of the NS record (bhaneko agadi yo hostname ko lag authorative hosst yo ho ra tyo authorative host ko IP pani ta chaiyo ni sathi haru)

DNS Messages:

-There are only two kinds of DNS messages, both query and reply have the same format

-The first 12 bytes is the header section, which has a number of fields, first is a 16 bit number that identifies the query, which is copied into the reply message to a query, allowing the client to match received replies with sent queries

-

.....

-To send query directly from the host to some DNS server can be done with nslookup program

Inserting records into the DNS Database: -To register a domainname networkutopia.com at a registar which is a commerical entity that verifies the uniquess of the domain name, wchic enters the domain name into the DNS database, and collects a small fee from you for its services -There are many regisrars competing for customers, and the ICANN accredits the various registrars -When you register the domain name networkutopia.com with some registrar, you need to provide the registrar with the names and IP addresses of you rprimarly and secondary authorative DNS servers -Suppose the names and IP addresses are dns1.networkutopia.com, dns2.networkutopia.com, 212.2.212.1 and 212.212.212.2, for each of thse authorative DNS servers, the registrar would then make sure that a Type NS and Type A record are entered into the TLD com servers -Also need to make sure that the Type A resource record for your Web server and Type MX for your mail server are entered into your authorative DNS -Until recently, the conents of each DNS server were configured statically, for ex, from a configuration file created by a system manager -More recently, an UPDATE option has been added to the DNS protocl to allow data to be dynamically added or dleted from the database via DNS messages

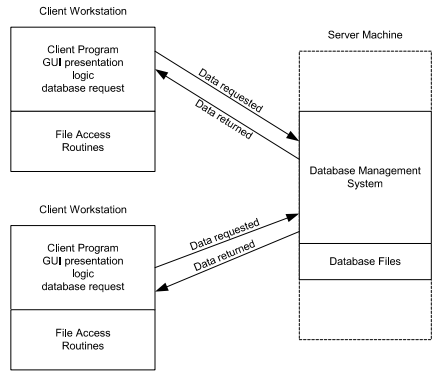

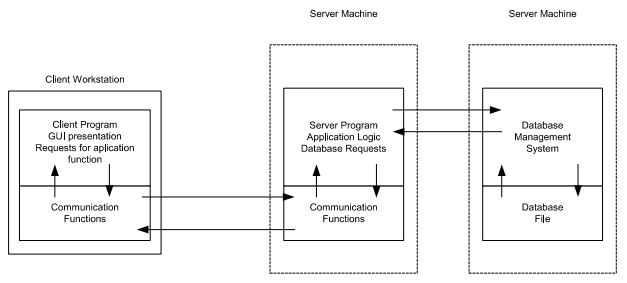

N - tier Client Server Architecture

The client-server model is a distributed application structure that partitions tasks or workloads between the providers of a resource or service, called servers, and service requesters, called clients. Often clients and servers communicate over a computer network on separate hardware, but both client and server may reside in the same system as well.

N-tier application architecture provides a model by which developers can create flexible and reusable applications. By segregating an application into tiers, developers acquire the option of modifying or adding a specific tier, instead of reworking the entire application.

A server host runs one or more server programs, which share their resources with clients. A client usually does not share any of its resources, but it requests content or service from a server. Clients, therefore, initiate communication sessions with servers, which await incoming requests. Examples of computer applications that use the client-server model are email, network printing, and the WWW.

Traditional systems: Users interact with these systems through terminals (keyboards and screens) that have no processing capability of their own; they simply send their input to the mainframe and display the results of processing. This model is highly centralized, with the mainframe at the core handling all computations, storage, and management tasks.

2-tier architecture:

Operational layers:

- Client layer (presentation tier): user interface layer where the application is accessed and interacted with by the user. It resides on the client side.

- Server layer (data tier): includes the database management system, where the data is stored, retrieved, and updated. It processes requests from the client layer and sends back the results.

Key points:

- Direct communication

- Simplicity

- Scalability issues: adding more clients or increasing database size can significantly impact performance

- Maintenance: changes in the database structure can require updates to the client-side application

3-tier architecture:

Operational layers:

- Presentation tier client: user interface where the application is accessed by the user, solely focuses on presenting the data to the user. The presentation layer displays information related to services such as browsing merchandise, purchasing and shopping cart contents. It communicates with other tiers by which it puts out the results to browser/client tier and all other tier in network.

- Application tier: acts as an intermediary between the presentation tier and the data tier, processes business logic, computations and makes logical decisions. It controls an application’s functionality by performing detailed processing.

- Data tier: consists of DBMS

Key points:

- Enhance scalability

- Improved security

- Flexibility

- Complexity

The Web and HTTP

The Internet was essentially unknown outside of the academic and research communities, the Web was the first Internet application that caught the general public’s eye.

Most appealing of the Web is operating on demand, users receive what they want, when they want it, which is unlike traditional broadcast radio and television, which force users to tune in when the content provider makes the content available.

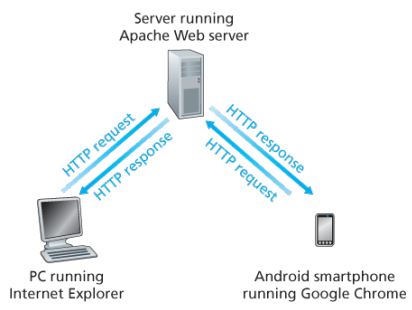

The HTTP is at the heart of the Web. HTTP is implemented in two programs: a client program and a server program, executing on different end systems, talk to each other by exchanging HTTP messages.

A web page consists of objects, an object is simply a file such as an HTML file, a JPEG image, or a video clip -that is addressable by a single URL. Most web pages consist of a base HTML file and several referenced objects. For example, if a web page consists HTML text and five JPEG, then the web page has six objects, the base HTML plus other fives images.

Each URL has two components: the hostname of the server that houses the object and the object’s path name. For example: has as a hostname and for a path name.

A segway:

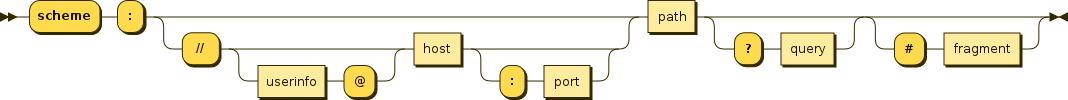

URIs and URLs have a shared history. Tim Berners-Lee’s proposals for hypertext implicitly introduced the idea of URL as a short string representing resource that is the target of a hyperlink. Over the next three and a half years, as the WWW core technologies of HTML, HTTP, and Web browsers developed, a need to distinguish a string that provided an address for a resource from a string that merely named a resource emerged. Although not yet formally defined, the term Uniform Resource Locator came to represent the former, and the more contentious Uniform Resource Name came to represent the latter.

Every HTTP URL conforms to the syntax of a generic URI. The URI generic syntax consists of five components organized hierarchically in order of decreasing significance from left to right:

URI = scheme ":" ["//" authority] path ["?" query] ["#" fragment]

The authority component consists of subcomponents:

authority = [userinfo "@"] host [":" port]

Back: Web browsers implement the client side of HTTP. Web servers implement the server side of HTTP, house Web objects addressable by a URL. Popular Web servers include Apache and Microsoft Information Sever.

HTTP defines how Web clients request Web pages (or objects?) from Web servers and how servers transfer Web pages to clients. When a user requests a Web page the browser sends HTTP request messages for the objects in the page to the server, the server receives the requests and responds with HTTP response messages that contain the objects.

HTTP uses TCP as its underlying transport protocol rather than running on top of UDP. The HTTP client initiates a TCP connection with the server. Once the connection is established, the browser and the server processes access TCP through their socket interfaces.

Once the client sends a message into its socket interface, the message is out of the client’s hands and is in the hands of TCP which implies that the message eventually arrives intact at the server. The server sends requested files to clients without storing any state information about the client, it completely forgets what it did earlier, HTTP is said to be a stateless protocol.

Non persistent vs persistent:

In many Internet applications, the client and server communicate for an extended period of time, with the client making a series of requests and the server responding to each of the requests. Depending on the application and on how the application is being used, the series of requests may be made back-to-back, periodically at regular intervals, or intermittently, the application developer needs to make an important decision -should each request/response pair be send over a separate (non-persistent) or same (persistent) TCP connection? Although HTTP uses persistent connections in its default mode, HTTP clients and servers can be configured to use non-persistent connections instead.

Non-persistent connections:

- Steps of transferring a web page that consists of a base HTML file and 10 JPEG images and all 11 of these objects reside on the same server

http://www.someschool.edu/someDepartment/home.index. - HTTP client process initiates a TCP connection to the server

someschool.eduon port number 80, which is the default port number for HTTP, associated with the TCP connection, there will be a socket at the client and a socket at the server. - Client sends a HTTP request that includes the path name /someDeparment/home.index

- Server process receives the request message via its socket, retrieves the object from its storage, encapsulates the object in an HTTP response message, and sends to the client via its socket

- HTTP server process tells TCP to close the TCP connection but TCP doesnot actually terminate the connection until it knows for sure that the client has received the response message intact

- HTTP client receives the response message, the TCP connection terminates, the message indicates that the encapsulated object is an HTML file, the client extracts the file from the response message, examines the HTML file, and finds references to the 10 JPEG objects

- The first four steps are then repeated for each of the referenced JPEG objects.

Note that TCP connection transports exactly one request message and one response message, hence 11 TCP connections are generated. Users can configure modern browsers to control the degree of parallelism, in their default modes, most browsers open 5 to 10 parallel TCP connections and each of these connections handles one request-response transaction.

To initiate a TCP connection involves three way handshake, the client sends a small TCP segment to the sever, the server acknowledges and responds with a small TCP segment, and finally, the client acknowledges back to the server with the HTTP request message. Thus, roughly, the total response time is two round-trip times plus the transmission time at the server of the HTML file.

Persistent connections:

- For each of non-persistent connections, TCP buffers must be allocated and TCP variables must be kept in both the client and server, can be a significant burden on a Web server, which may be serving requests from hundreds of different clients

- Each object suffers a delivery delay of two RTTs

- With HTTP 1.1 persistent connections, the server leaves the TCP connection open after sending a response, subsequent requests and responses between the same client and server can be sent over the same connection

- Multiple web pages residing on the same server can be sent from the server to the same client over a single persistent TCP connection, can be made back to back without waiting for replies to pending requests (pipelining)

- Typically HTTP server closes a connection when it isnt used for a certain time (a configurable timeout interval)

- HTTP/2 allows multiple requests and replies to be interleaved in the same connection, and a mechanism for priotizing HTTP message requests and replies within this connection

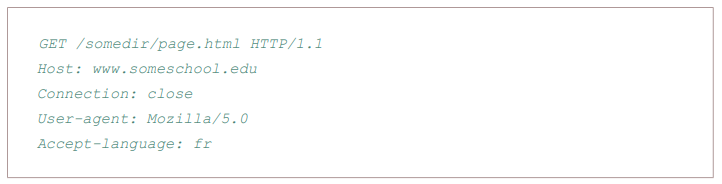

HTTP Message format:

There are two types of HTTP messages, request messages and response messages

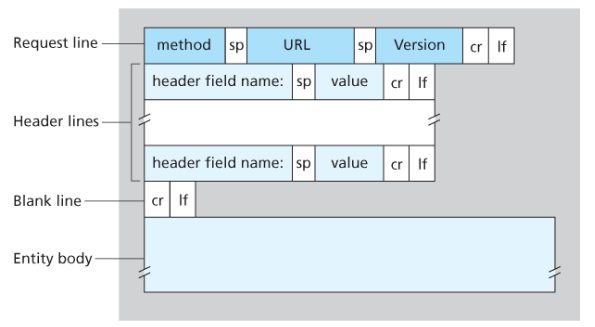

General format of HTTP request message:

Properties

- Message is written in ordinary ASCII text.

- Message consists of five lines, each followed by a carriage return and a line feed, the last line is followed by an additional carriage return and line feed

- Although this particular has five lines, a request message can have many more lines or as few as one line

- The first line of an HTTP request is the request line; subsequents are header lines

- The request line has three fields: the method field, the URL field, and the HTTP version field

- The method field can take on several different values, including GET, POST, HEAD, PUT, and DELETE

- Great majority of HTTP request message use the GET method, which is used when the browser requests an object, with the requested object identified in the URL field

- The header line Host specifies the host on which the object resides, which seems unnecessary, as there is already a TCP connection in place to the host but the information provided by the host header is required by Web proxy caches.

- By providing Connection header line, the browser is telling the server that it does not want to bother with persistent connections; it wants the server to close the connection after sending the requested object.

- The User-agent: header specifies the user agent, that is, browser type that is making the request to the server which is useful because the server can actually send different versions of same object to different types of user agents

- The Accept-language indicates the version of the object that the user prefers

- The entity body is empty with the GET method, but is used with the POST method

- An HTTP client often uses the POST method when the users fills out a form -for example, when a user provides search words to a search engine, with a POST message, the user is still requesting a Web page from the server, but the specific contents of the Web page depends on what the user entered into the form fields

- A request generated with a form does not necessarily use the POST method, instead HTML forms often use the GET method and include the inputted data in the form fields in the requested URL, if a form uses the GET method, has a field with input ‘monkey’, then the URL will have structure …/search?monkeys

- HEAD method is similar to GET method, when a server requests with the HEAD method, it responds with an HTTP message but leaves out the requested object, often used for debugging

- The PUT method is often used in conjuction with Web publishing tools, allows a user to upload an object to a specific directory on a Web server, used by applications that need to upload objects to Web servers

- The DELETE method allows a user, or an application to delete an object on a Web server

Types:

- GET: Requests a representation of the specified resource. GET requests should only retrieve data and have no effect.

- POST: Used to submit an entity to the specified resource, often causing change in state or side effects on the server.

- PUT: Replaces a current representations of the target resource with the request payload.

- DELETE: Removes the specified resource.

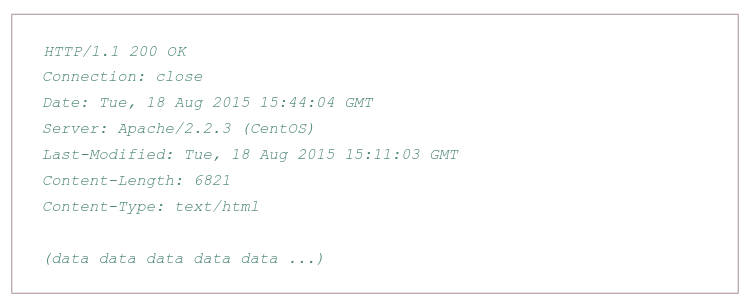

Typical HTTP response message:

Properties:

- Has three sections: an initial status line, six header lines, and then the entity body, which is the meat of the message which contains the requested object iself

- The status line has three fields: the protocol version field, a status code, and a corresponding status message, and a corresponding status messagse

- The status code and associated phrase indicate result of the request, some common status codes and associated phrases:

- 200 OK: Request succeeded and the information is returned in the response

- 301 Moved Permanently: Requested object has been permanently moved; the new URL is specified in Location: header of the response message, client software will automatically retrieve the new URL

- 400 Bad Request: Generic error code indicating that the request could be understood by the server

- 404 Not Found: Requested document does not exist on this server

- 505 HTTP Version Not Supported

- The server uses the Connection: close header line to tell the client that it is going to close the TCP connection after sending the message

- The Date: header line indicates the time and date when the HTTP response was created and sent by the server, which is not when the object was created or last modified

- The Server indicates that the message was generated by an Apache Web server; it is analgous to User-agent header in the HTTP request message

- The Last-Modified is critical for object caching, both in the local client and in network cache servers also known as proxy servers

- The Content-Length: header indicates the number of bytes in the object being sent

- The Content-Type: header line indicates that the object in the entity body is HTML text which is officially indicated by the Content-Type: header and not by the file extension

Real and web browser packets: First telnet into your favourite web server, then type in a one-line request message for some object that is housed on the server

telnet pcampus.edu.np 80

GET /index.html HTTP/2.0

Host: pcampus.edu.np

After carriage return twice, opens a TCP connection to port 80 of the host gais.cs.umass.edu and then sends the HTTP request message

- The HTTP specification defines many, many more header lines that can be inserted by browsers, Web servers, and network cache servers

- A web browser will generate header lines as a function of the browser type and version, the user configuration of the browser, and whether the browser currently has a cached, but possibly out-of-date version of the object, web servers behave similarly

HTTP/1.1

This version came up in early 1997, few months after its predecessor. The main changes were:

- Persistent TCP connections (keep-alive), saving machine and network resources. In the previous version, a new TCP connection was opened for each request and closed after the response

- ‘Host’ header, allowing more than one server under the same IP.

- Header conventions for encoding, cache, language and MIME type.

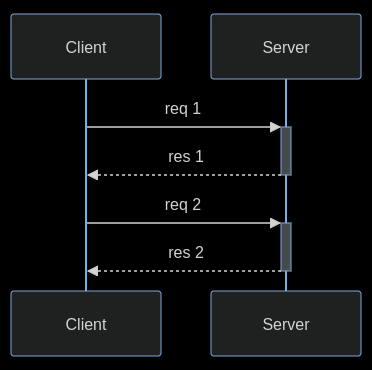

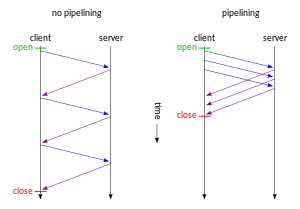

In HTTP/1.1 two requests cannot ride together the same TCP connection - it is necessary that the first one ends for the subsequent to being. This is called head-of-line blocking. In the diagram below, request 2 cannot be sent until response 1 arrives, considering that only one TCP connection is used.

HTTP pipelining is a feature of HTTP/1.1 which allows multiple HTTP requests to be sent over a single TCP connection without waiting for the corresponding responses. The technique was superseded by multiplexing via HTTP/2.

HTTP/2

In 2015, after many years of observation and studies on the performance of the Internet, the HTTP/2 was proposed and created, based on Google’s SPDY.

The working group charter mentions several goals and issues of concern:

- Create a negotiation mechanism that allows clients and servers to elect to use HTTP/1.1, 2.0, or potentially other non-HTTP protocols

- Maintain high-level compatibility with HTTP/1.1

- Decrease latency to improve page load speed in web browsers by considering:

- Data compression of HTTP headers (HPACK compression)

- Prioritization of requests

- Message format: binary?

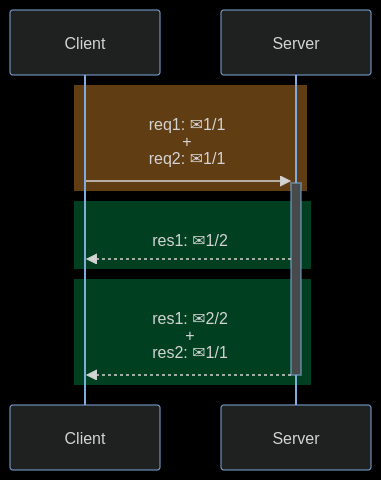

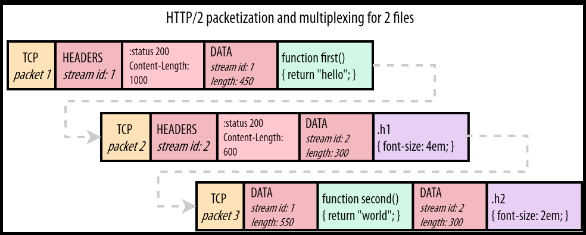

- Multiplexing multiple requests over a single TCP connection (fixing the HTTP-transaction-level head-of-line blocking problem in HTTP 1.x even when HTTP pipelining is used). HOL blocking in computer networks is a performance limiting phenomenon that occurs when a line of packets is held up in a queue by a first packet.

- Server push: The server can send additional information needed for a request before it is requested?

How HOL is solved?

HTTP/2 streams are divided into frames, each one containing: the frame type, the stream that it belongs to, and the length in bytes. In the diagram below, a colored rectangle is a TCP packet and ✉ is a HTTP/2 frame inside it. The first and the third TCP packets carry frames of different streams.

The image below shows how frames go inside a TCP packet. Stream 1 carries a HTTP response for a Javascript file and stream 2 carries a HTTP response for a CSS file.

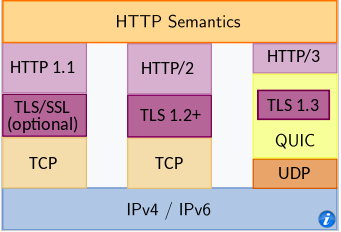

HTTP/3

HTTP/3 is a third major version of HTTP used to exchange information on the WWW, complementing widely-deployed HTTP/1.1 and HTTP/2. Unlike previous versions which relied on well-established TCP, HTTP/3 uses QUIC, a multiplexed transport protocol built on UDP.

HTTP/3 uses similar semantics compared to earlier revisions of the protocol, including the same request methods, status codes, and message fields, but encodes and maintains session state differently. However, partially due to the protocol’s adoption of QUIC, HTTP/3 has lower latency and loads more quickly in real-world usage when compared with previous versions: in some cases over 3× faster than with HTTP/1.1

It proposes:

- fewer packets roundtrips to establish connection and TLS authentication

- more resilient connections regarding packet losses

- to solve the head-of-line blocking that exists in TCP and TLS

HTTP/2 solves the HTTP head-of-line blocking, but, this problem also happens with TCP and TLS. TCP understands that the data it needs to send is a contiguous sequence of packets, and if any packet is lost, it must be resent, in order to preserve information integrity. With TCP, subsequent packets cannot be sent until the lost packet is successfully resent to the destination.

To solve TCP’s head-of-line blocking, QUIC decided to use UDP for its transport protocol, because UDP does not care for guarantees of arrival. The responsibility of data integrity, that in TCP is part of the transport layer, is moved in QUIC to the application layer, and the frames of a message can arrive out of order, without blocking unrelated streams.

QUIC allows for a quicker connection establishment compared to traditional TCP handshakes.

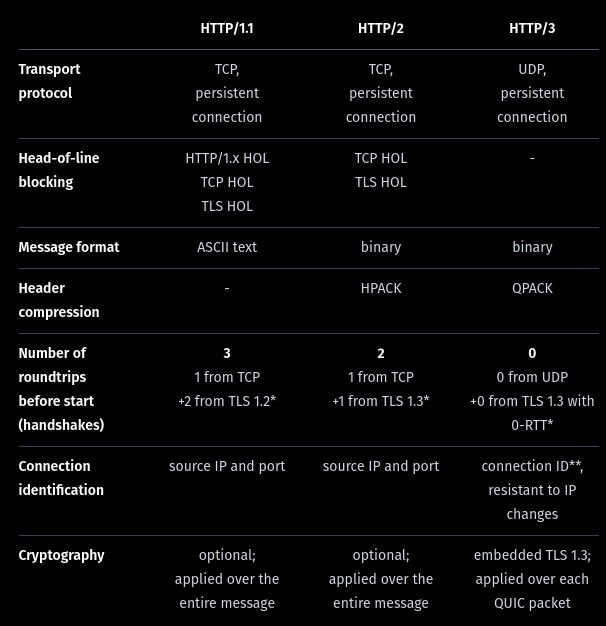

Differences:

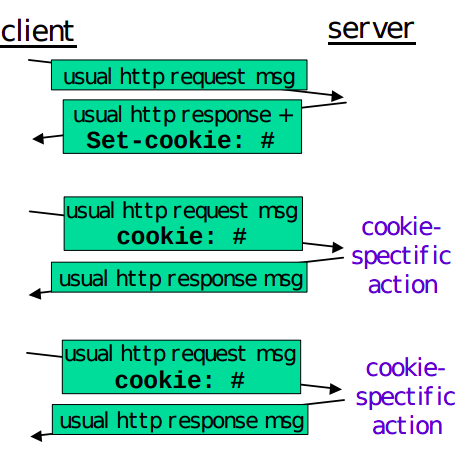

User-server interaction/ cookies:

As HTTP server is stateless, simplifies server design and has permitted engineers to develop high-performance. Web servers that can handle thousands of simultaneous TCP connections. Often desirable for a site to identify users, for these purposes, HTTP uses cookies, which allow sites to keep track of users.

Cooking technology has four components:

- a cookie header line in the HTTP response message

- a cookie header line in the HTTP request message

- a cookie file kept on the user’s end system and managed by the user’s browser

- a back-end database at the Web site

How cookie works?

- When the request comes into the Amazon web server for the first time, the server creates a unique ID and creates an entry in its backend database that is indexed by the ID

- The server then responds back with HTTP response including a Set-cookie header which includes the ID

- When browser receives the HTTP response message, it sees the Set-cookie header, then the browser appends a line to the special cookie file which includes the hostname of the server and the ID in the Set-cookie header

- As the browser continues to request a Web page for the site, puts a cookie header line that includes the ID in the HTTP request

- If the user has registered providing full name, email, then Web server can include this information in database, thereby associating personal information with identification number

Web caching

(more about proxy servers later)

A HTTP proxy server is a network entity that satisfies HTTP requests on the behalf of an origin Web server, which has its own disk storage and keeps copies of recently requested objects in this storage.

A web browser can be configured so that all of the user’s HTTP requests are first directed to the Web cache.

- The browser establishes a TCP connection to the Web cache and sends an HTTP request for the object to the Web cache.

- The Web cache checks to see if it has a copy of the object stored locally, if it does, returns the object within an HTTP response message to the client browser

- If does not, the Web cache opens a TCP connection to the server, then sends an HTTP request for the object into the cache-to-server TCP connection.

Typically is purchase and installed by an ISP

Can substantially reduce the response time for a client request, particularly if the bottleneck bandwidth between the client and the origin server is much less than the bottleneck bandwidth between the client and the cache

Can substantially reduce traffic on an institution’s access link to the Internet

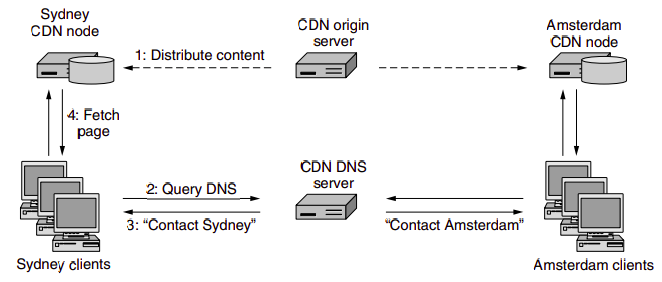

The Content Distribution Networks install many geographically distributed caches throughout the internet, thereby localizing much of the traffic

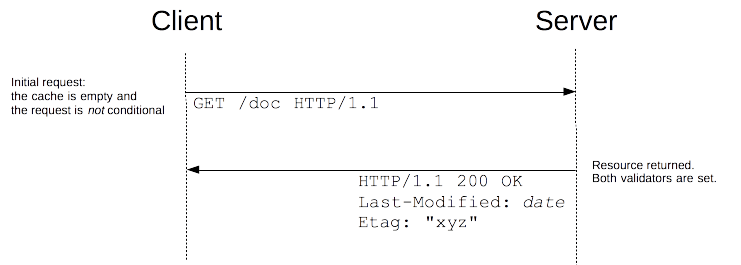

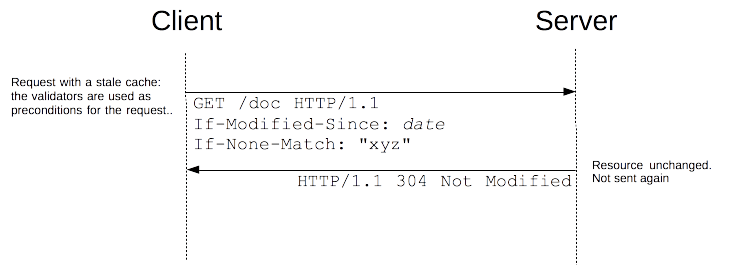

The Conditional GET:

- Caching can reduce user-perceived response times, introduces a new problem - the copy of an object residing may be stale, may have been modified since the copy was cached at the client.

- HTTP has a mechanism that allows a cache to verify that its objects are up to date called the conditional GET

- So the cache now also stores the last-modified date along with the object, then if another browser requests the same object via the cache, and the object is still in the cache, it performs an up-to-date check by issuing a conditional GET, specifically it sends:

GET /fruit/kiwi.gif HTTP/1.1

Host: www.exotiquecuisine.com

If-modified-since: Wed, 9 Sep 2015 09:23:24

The conditional GET is telling the server to send the object only if the object has been modified since the specified date, if has not been modified then the Web server sends a response message to the cache with empty entity body.

(more details)

The most common use case for conditional requests is updating a cache. With an empty cache, or without a cache, the requested resource is sent back with a status of 200 OK.

Together with the resource, the validators are sent in the headers. In this example, both Last-Modified and ETag are sent, but it could equally have been only one of them. These validators are cached with the resource (like all headers) and will be used to craft conditional requests, once the cache becomes stale.

As long as the cache is not stale, no requests are issued at all. But once it has become stale, this is mostly controlled by the Cache-Control header, the client does not use the cached value directly but issues a conditional request. The value of the validator is used as a parameter of the If-Modified-Since and If-None-Match headers.

If the resource has not changed, the server sends back a 304 Not Modified response. This makes the cache fresh again, and the client uses the cached resource. Although there is a response/request round-trip that consumes some resources, this is more efficient than to transmit the whole resource over the wire again.

if the resource has changed, the server just sends back a 200 OK response, with the new version of the resource (as through the request wasn’t conditional). The client uses this new resource (and caches it).

if the resource has changed, the server just sends back a 200 OK response, with the new version of the resource (as through the request wasn’t conditional). The client uses this new resource (and caches it).

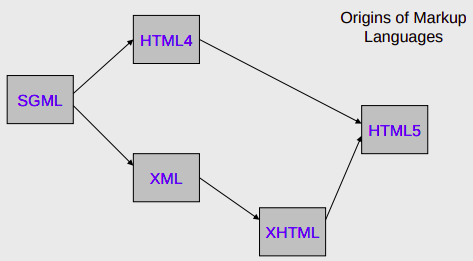

HTML

HTML is the standard markup language for documents designed to be displayed in a web browser. It defines the content and structure of web content. It is often assisted by technologies such as CSS and scripting languages such as JavaScript. Web browsers receive HTML documents from a web server or from local storage and render the documents into multimedia web pages. HTML describes the structure of a web page semantically and originally included cues for its appearance.

HTML documents can be delivered by the same means as any other computer file. However, they are often delivered by HTTP from a webserver or by email. Dynamic HTML (DHTML) is a term which was used by some browser vendors to describe the combination of HTML, style sheets, and client-side scripts that enabled the creation of interactive and animated documents.

DHTML allows scripting languages to change variables in a web page’s definition language, which in turn affect the look and function of otherwise static HTML page content after the page has been fully loaded and during the viewing process.

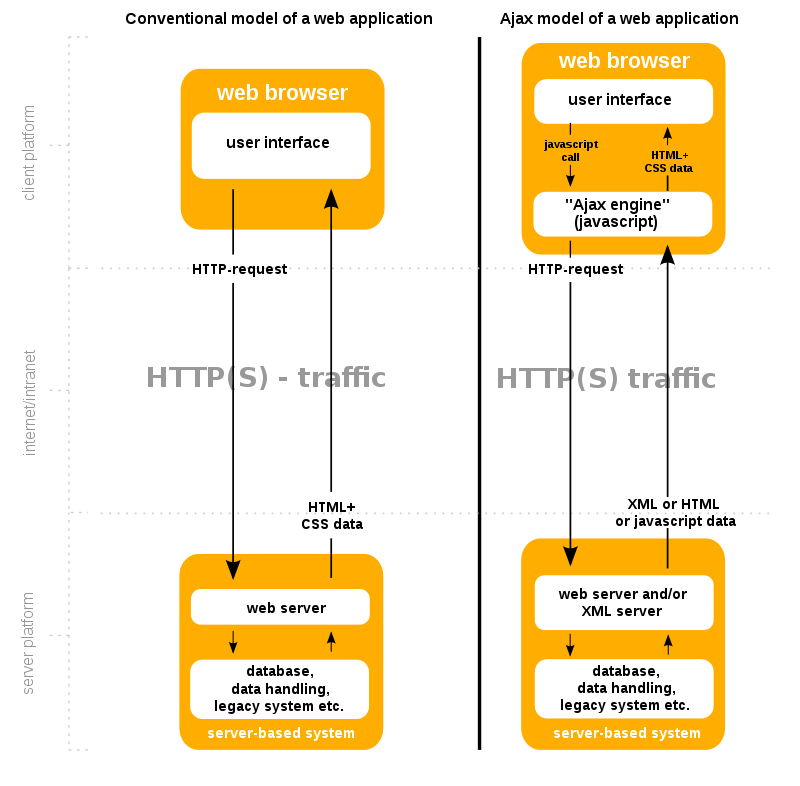

DHTML is the predecessor of Ajax and DHMTL pages are still request/reload-based. Under the DHTML model, there may not be any interaction between the client and server after the page is loaded; all processing happens on the client side. By contrast, Ajax extends features of DHTML to allow the page to initiate network requests (or subrequests) to server even after page load to perform additional actions. For example, if there are multiple tabs on a page, the pure DHTML approach would load the contents of all tabs and then dynamically display only the one that is active, while AJAX could load each tab only when it is really needed.

DHTML is not a technology in and of itself; rather, it is the product of three related and complementary technologies.

Typically a web page using DHTML is set up in the following way:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>DHTML example</title>

</head>

<body bgcolor="red">

<script>

function init() {

let myObj = document.getElementById("navigation");

// ... manipulate myObj

}

window.onload = init;

</script>

<!--

Often the code is stored in an external file; this is done

by linking the file that contains the JavaScript.

This is helpful when several pages use the same script:

-->

<script src="my-javascript.js"></script>

</body>

</html>DOM:

The DOM API is the foundation of DHTML, providing a structure interface that allows access and manipulation of virtually anything in the document. The HTML elements in the document are available as a hierarchical tree of individual objects, making it possible to examine and modify an element and its attributes by reading and setting properties and by calling methods. The text between elements is also available through DOM properties and methods.

In HTML DOM, every element is a node: a document is a document node, all HTML elements are element nodes, all HTML attributes are attribute nodes, text inserted into HTML elements are text nodes, comments are comment nodes.

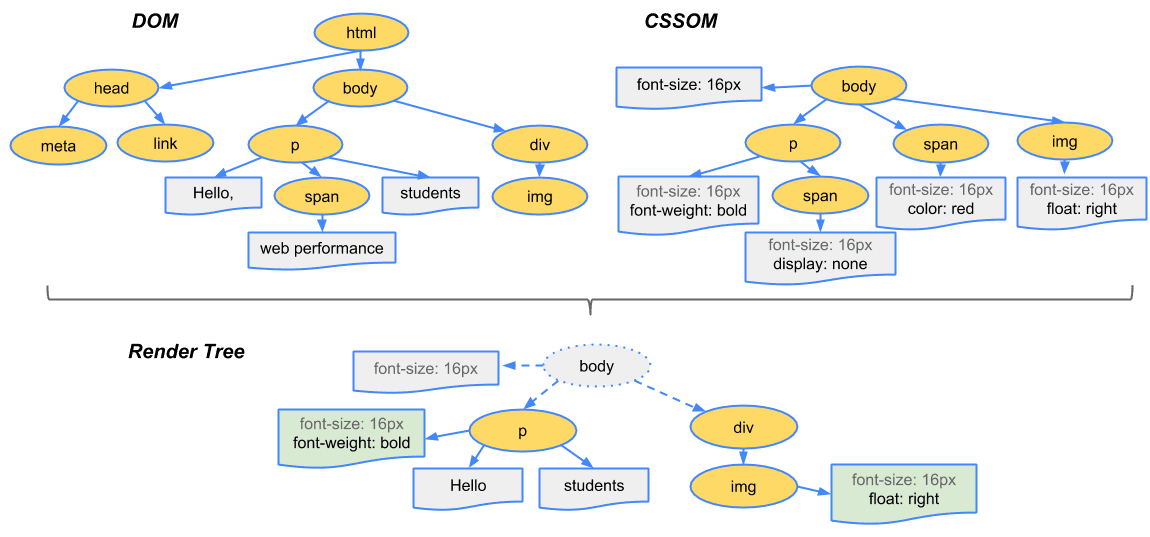

The CSSOM and DOM trees are merged into a render tree, which computes the layout of each visible element and serves as an input to the paint process that renders the pixels to the screen. Optimizing each of these steps is crucial to achieving optimal rendering performance.

To construct the render tree, the browser roughly does the following:

-

Starting at the root of the DOM tree, traverse each visible node

- Some nodes are not visible (for example, script tags, meta tags, and so on), and are omitted since they are not reflected in the rendered output

- Some nodes are hidden via CSS and are also omitted from the render tree; for example, the span node, is missing from the render tree because we have an explicit rule that sets the ‘display:none’ property on it.

-

For each visible node, find the appropriate matching CSSOM rules and apply them

-

Emit visible nodes with content and their computed styles.

At a glance:

Step 1: The browser parses the HTML file first, and that leads to the browser recognizing any link element references to external CSS stylesheets and any script element references to scripts

Step 2: As the browser parses the HTML, it sends request back to the server for any CSS files it has found from link elements, and any JS files it has found from script elements, and from those, then parses the CSS and JS.

Step 3: The browser generates an in-memory DOM tree from the parsed HTML, generates an in-memory CSSOM structure from the parsed CSS, and compiles and executes the parsed JS.

Step 4: As the browser builds the DOM tree and applies the styles from the CSSOM tree (render tree) and executes the JavaScript, a visual representation of the page is painted to the screen, and the user sees the page content and can begin to interact with it.

Let’s talk about Ajax, moving on from DHTML:

AJAX

Remember websites in the 90’s?. When you clicked anything, a new page would have to load to show the effect of your click even if it was nothing. That is the internet before AJAX. Now take a look at the very Web with buttons. That’s AJAX for you.

When the browsers send requests to servers for HTML files, those HTML files often contain link elements referencing external CSS stylesheets and script elements referencing external JS scripts.

The term Ajax has come to represent a broad group of Web technologies that can be used to implement a Web application that communicates with a server in the background, without interfering with the current state of the page.

- HTML (or XHMTL) and CSS for presentation

- The DOM for dynamic display of and interaction with data

- JSON or XML for the interchange of data, and XSLT for XML manipulation

- The XMLHttpRequest object for asynchronous communication

- Javascript to bring these technologies together.

Note: The XHR is a JS class containing methods to asynchronously transmit HTTP requests from a web browser to a web server. Fetch is a native JS API. Fetch makes it easier to make web requests and handle responses than the older XHR.

Ajax offers several benefits that can significantly enhance web application performance and user experience. By reducing traffic and improving speed, Ajax plays a crucial role in modern web development. One key advantage of Ajax is its capacity to render web applications without requiring data retrieval, resulting in reduced server traffic. This optimization minimizes response times on both the server and client sides, eliminating the need for users to endure loading screens.

Example of how AJAX works:

-

User Action. The user performs an action (like pressing an up arrow). This button is equipped with an event listener that detects the click.

-

Javascript Call: The event listener triggers a JS function that uses AJAX to send an asynchronous request to the sever. This is typically done using XMLHttpRequest object or the Fetch API.

-

Server Processing: The server receives the request to like the post with ID 12345. It processes this request, which might involve updating the database to record the new like, incrementing the like count for the post, and performing and other necessary logic.

-

Server Response: After processing the request, the server sends a response back to the client. This response might include a status message indicating the request was successful, the new total number of likes for the post, or an error message if something went wrong.

-

Client-side Processing: Once the response is received, the JavaScript code then processes this information. If the action was successful, the code updates the like button to reflect that the user has liked the post. This might involve changing the color of the like button to orange and updating the displayed like count next to the button.

In the absence of AJAX, interacting with a web page, such as liking a post, would require the entire page to reload every time the user performs an action. The user clicks the like button, the page sends a request to the server, then the entire page refreshes to display the updated content, leading to a noticeable interruption in the user experience.

Since we not building the entire page with every click, you can keep information about the site in the browser. This can be used throughout your entire visit and future visits. ReactJs is one of a javascript library for building and maintaining single page applications.

XML

Limitations of HTML:

- HTML is a fixed specification with a finite set of elements. It is not extendable, and as a result of this limitation, Web developers and software vendors have stretched the usefulness of HTML almost to a breaking point.

- Browser vendors such as Microsoft have added proprietary features and additional elements to their browsers based on demands for more functionality, but in so doing, they have compromised one of the most important benefits that HTML has to offer - portability.

- The limitations of HTML made it very clear that a new and better language was necessary for formulating Web documents. In addition, may companies were adding transactional functionality to their Web sites, such as allowing visitors to purchase items and services online.

- This marked a radical departure from the first generation of websites, which mainly provided static information that was easily stored as text. These new websites relied heavily on data gathered from different sources, such as databases, news feeds, and other Web sites

- The resulting language was the XML. XML is an extensive language because, unlike HTML, it allows users to define their own tags.

XML:

XML is a markup language and file format for storing, transmitting, and reconstructing arbitrary data. It defines a set of rules for encoding documents in a format that is both human-readable and machine-readable. The main purpose of XML is serialization, i.e storing, transmitting, and reconstructing arbitrary data. For two disparate systems to exchange information, they need to agree upon a file format. XML standardizes this process.

XML itself is not a language - it is a meta language. A meta language is a set of rules used for building markup languages. Structured languages can be developed that describe certain types of data rather than just the presentation of the data. Such structured languages include elements that describe documents containing information about an account, an item, a service, or a transaction. XHTML is an application of XML that is used for formatting Web documents. There are many other XML languages, some still under development, such as RSS (Really Simple Syndication), MathML, GraphML, MusicXML.

Benefits:

- It allows data to be self-describing, as opposed to being limited by a predefined set of elements.

- Lets you create custom data structures for industry-specific or company specific needs.

XHTML:

XHTML is a standard proposed by W3C that adapts HTML into an extensible concept by using XML. XML defines that data can be shared on the web. It is extensible because anyone can invent a new set of purposes such as describing the appearance of a web page. To enhance web pages, HTML was redesigned by using XML to form XHTML. XHTML is portable to enable small devices to support embedded programming. XHTML brings different programming practices. It has strict code rules such as symmetrical form, use lowercase, enclose elements with quotes and end tag with a forward slash at the end of the element and before the closing angle bracket.

TCP and IP

Network layer can be decomposed into two interacting parts, the data plane and the control plane

The data plane function of the network layer - the per router functions in the network layer that determine how a datagram arrive on one of a router’s input links is forwarded to one of that router’s output links.

The control plane functions of the network layer - the network wide logic that controls how a datagram is routed among routers along an end-to-end path from the source host to the destination host.

Traditionally the control plane routings and data-plane forwarding have been implemented together monolithicallys, within a router.

Example:

Lets suppose H1 host is sending information to H2 and several routers on the path between H1 and H2. The network layer in H1 takes segments from the transport layer in H1, encapsulates each segment into a datagram, and then sends the datagrams to its nearby router, R1. At the receiving host, H2 the network layer receives the datagrams from its nearby router R2, extracts the transport layer segments, and delivers the segments up to the transport layer at H2.

The primary dataplane role of each router is to forward datagrams from its input links to its output links; primary role of the network control plane is to coordinate these local, per-router forwarding actions so that datagrams are ultimately transferred end-to-end, along paths of routers between source and destination hosts

Forwarding When a packet arrives at a router’s input link, the router must move the packet to appropriate output link. A packet might also be blocked from exiting a router or might be duplicated and sent over multiple outgoing links. Forwarding refers to the router-local action of transfering a packet from an input link interface to the appropriate output link interface, takes place at very short timescales, and thus is typically implemented in hardware.

Routing The network layer must determine the route or path taken by packets as they flow from sender to receiver. A routing algorithm would determine, the path along which packets flow from H1 to H2. Routing refers to network wide process that determines the end-to-end paths that packets take from source to destination, takes place on much longer timescales and is implemented in software

How routing and forwarding are related? Note: A key element in every network router is its forwarding table, a router forwards a packet by examining the value of one or more fields in the arriving packet’s header and then use these header values to index into its forwarding table. The value stored in the forwarding table entry for those values indicates the outgoing link interface at that router to which that packet is to be forwarded. The routing algorithms determines the contents of the routers’ forwarding tables. The routing algorithm function in one router communicates with the routing algorithm function in other routers to compute the values for its forwarding table. A technically feasible case is in which network forwarding tables are configured directly by human network operators physically present at the routers, no routing protocols would be required.

Couldn’t the human approach be modified to be efficient? The approach to implementing routing functionality with each router having a routing component that communicates with the routing component of other routers -has been traditional approach adopted by routing routers. Observation that humans could configure forwarding tables suggest that there are others ways for control-plane functionality to determine the contents of data-plane forwarding tables. An alternate approach is where a physically separate from the routers, remote controller computes and distributes the forwarding tables to be used by each and every router. Remote controller might be implemented in a remote data center with high reliability and redundancy, and might be managed by the ISP or some third party

Network service model

Some possible services that the network layer could provide:

- Guaranteed delivery: The service guarantees that a packet sent by a source host will eventually arrive at the destination host.

- Guaranteed delivery with bounded delay: Not only guarantees delivery of the packet but delivery within a specified host to host delay bound

- In order packet delivery: Guarantees that packets arrive at the destination in the order that they were sent

- Guaranteed minimal bandwidth: Network layer emulates the behavior of a transmission link of a specified bit rate between sending and receiving hosts

- Security: Encrypt datagrams and decrypt them at destination

The Internet’s network layer provides a single service, known as best-effort service, with which, packets are neither guaranteed to be received in the order in which they were sent, nor is their eventual delivery guaranteed. Might appear that best-effort service is a euphemism for no service at all - a network that delivered no packets to the destination would satisfy the definition of best-effort delivery service. For example, the ATM network architecture provides for guaranteed in-order delay, bounded delay, and guaranteed minimal bandwidth.

IPv4 protocol

Two versions of IP in use today.

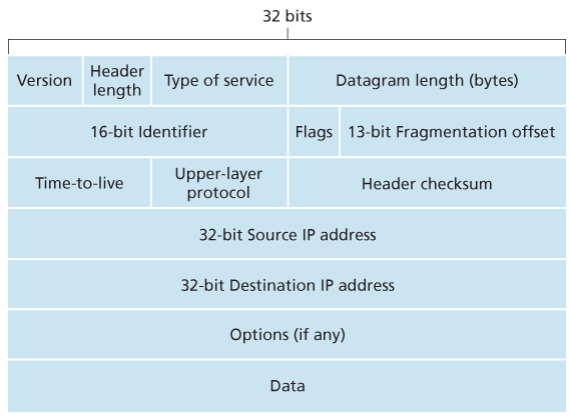

Key fields of IPv4 packet, referred to as a datagram:

- Version number: These 4 bits specify the IP protocol version of the datagram. By looking at version can determine how to interpret remainder of the IP datagram.

- Header length: Because IPv4 datagram can contain a variable number of options, these 4 bits are needed to determine where in the IP datagram the layer i.e. the transport layer segment being encapsulated actually begins.

- Type of service: Allows different types of IP datagrams to be distinguished from each other. For example it might be useful to distinguish real-time datagrams from non-real traffic.

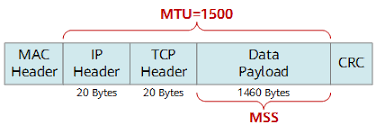

- Datagram length: This is the total length of the IP datagram (header plus data), measured in bytes. Since this field is 16 bits long, the theoretical maximum size of the IP datagram is 65,535 bytes. However, datagrams are rarely larger than 1,500 bytes, which allows an IP datagram to fit in the payload field of a maximally sized Ethernet frame.

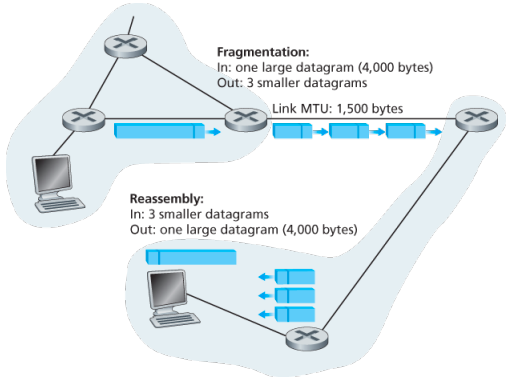

- Identifier, flags, 13-bit fragmentation offset: Have to do with so called IP fragmentation. (later)

- Time-to-live: The TTL field is to ensure that datagrams do not circulate forever, due to, for example, a long lived routing loop in the network. It is decremented by one each time the datagram is processed by a router, if the TTL reaches 0, a router must drop the datagram.

- Protocol: Typically used when an IP datagram reaches its final destination. The value of this field indicates the specific transport-layer protocol to which the data portion of this IP datagram should be passed. For example, a value of 6 indicates that the data portion is passed to TCP, while a value of 17 indicates that the data is passed to UDP.

- Header checksum: Aids a route in detecting bit errors in a received IP datagram. The header is computed by treating each 2 bytes in the header as a number and summing these numbers using 1s complement arithmetic, typically discard when there is checksum error, note that the checksum must be recomputed and stored again at each router, since the TTL filed, and possibility the options field as well, will change.

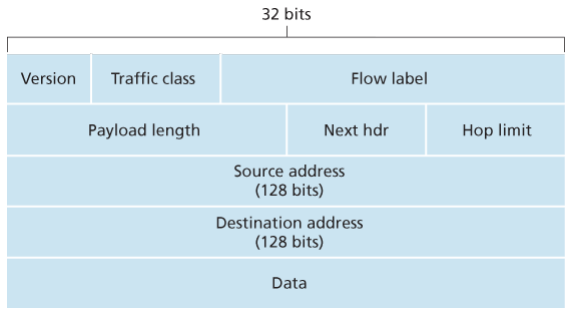

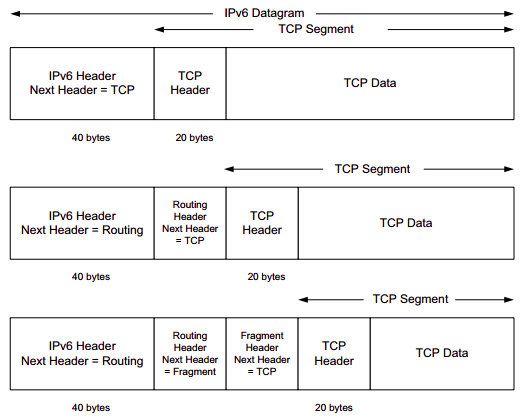

Why checksum at both TCP and IP? Only header is checksum-ed in IP while whole segment is at TCP, TCP/UDP and IP do not necessairly both have to belong to same protocol stack, TCP can run over a different network layer protocl for example ATM