Networks under attack:

1. The bad guys can put malware into your host via the Internet

-Along with all the good stuff comes malicious stuff, collectively known as malware, that can also enter and infect our devices

-Our compromised host may also be enrolled in a network of thousands of similarly compromised devices, collectively known as a botnet

2. The bad guys can attack servers and network infrastructure

-a denial-of-service (DoS) attack renders a network, host, or other piece of infrastructure unusable by legitimate users

-Most Internet DoS attacks fall into one of three categories:

>Vulnerability attack:

-involves sending a few well-crafted messages to a vulnerable application or OS running on a targeted host. If the right sequence of packets is sent, the service can stop or, worse, the host can crash

>Connection flooding:

-establishes a large number of half-open or fully open TCP connections at the target host, so that the host becomes so bogged down with these bogus connections that it stops accepting legitimate connections

>Bandwidth flooding:

-sends a deluge of packets to the targeted host, so many packets that the target's access link becomes clogged, preventing legitimate packets from reaching the server

-if the server has an access rate of R bps, then the attacker will need to send traffic at a rate of approximately R bps to cause damage. If R is very large, a single attack source may not be able to generate enough traffic to harm the server

-in a distributed DoS (DDoS) attack, the attacker controls multiple sources and has each source blast traffic at the target
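The bandwidth-flooding arithmetic above can be sketched in a few lines. This is only a rough illustration: the function name and the link and bot rates are hypothetical, and real attacks depend on many factors this ignores.

```python
import math

def sources_needed(access_rate_bps: int, per_source_bps: int) -> int:
    """Smallest number of sources whose combined rate reaches the access rate R."""
    return math.ceil(access_rate_bps / per_source_bps)

# Hypothetical numbers: a 10 Gbps access link and bots that can each send 5 Mbps.
R = 10_000_000_000
bot_rate = 5_000_000
print(sources_needed(R, bot_rate))
```

A single source sending at `bot_rate` falls far short of R, which is why the attacker in a DDoS attack spreads the load over many compromised hosts.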

3. The bad guys can sniff packets:

-A passive receiver in the vicinity of a wireless transmitter can obtain a copy of every packet that is transmitted. These packets can contain all kinds of sensitive information, including passwords, social security numbers, trade secrets, and private personal messages

-Sniffers can be deployed in wired environments as well. A packet sniffer can obtain copies of broadcast packets sent over the LAN. Cable access technologies also broadcast packets and are thus vulnerable to sniffing. A bad guy who gains access to an institution's access router or access link to the Internet may be able to plant a sniffer that makes a copy of every packet going to/from the organization

4. The bad guys can masquerade as someone you trust:

-It is surprisingly easy to create a packet with an arbitrary source address, packet content, and destination address and then transmit this hand-crafted packet into the Internet, which will dutifully forward the packet to its destination

-The ability to inject packets into the Internet with a false source address is known as IP spoofing, and is but one of many ways in which one user can masquerade as another user

-We need end-point authentication, a mechanism that will allow us to determine with certainty if a message originates from where we think it does

Network security problems can be divided roughly into four closely intertwined areas:

- Secrecy, also called confidentiality, has to do with keeping information out of the grubby little hands of unauthorized users.

- Authentication deals with determining whom you are talking to before revealing sensitive information or entering into a business deal.

- Nonrepudiation deals with signatures: how do you prove that your customer really placed an electronic order for 89 cents when he later claims the price was 69 cents?

- Integrity has to do with how you can be sure that a message you received was really the one sent and not something that a malicious adversary modified in transit.

Before getting into the solutions themselves, it is worth spending a few moments considering where in the protocol stack network security belongs. There is probably no one single place. Every layer has something to contribute. In the physical layer, wiretapping can be foiled by enclosing transmission lines (or better yet, optical fibers) in sealed tubes containing an inert gas at high pressure. Any attempt to drill into a tube will release some gas, reducing the pressure and triggering an alarm.

In the data link layer, packets on a point-to-point line can be encrypted as they leave one machine and decrypted as they enter another. All the details can be handled in the data link layer, with higher layers oblivious to what is going on.

In the network layer, firewalls can be installed to keep good packets in and bad packets out. IP security also functions in this layer.

In the transport layer, entire connections can be encrypted end to end, that is, process to process. For maximum security, end-to-end security is required.

Finally, issues such as user authentication and nonrepudiation can only be handled in the application layer.

Requirements

Computer network security encompasses various fundamental requirements to protect digital assets and ensure the overall well-being of an organization’s information technology infrastructure. These requirements can be summarized in terms of three key principles: confidentiality, integrity, and availability.

Confidentiality is about safeguarding sensitive information from unauthorized access or disclosure. This necessitates the implementation of robust access control mechanisms, which ensure that only authorized users can access critical data and network resources. Utilizing strong authentication methods, such as multi-factor authentication (MFA) and role-based access control (RBAC), plays a pivotal role in achieving this. Furthermore, data encryption should be employed to protect data both in transit and at rest. By encrypting data using protocols like SSL/TLS for web traffic and VPNs for remote access, organizations can ensure that even if unauthorized individuals gain access to it, they won’t be able to decipher its content.

Integrity assures that data and network resources remain accurate and unaltered. This is achieved through the implementation of data validation checks, checksums, and digital signatures. These measures help detect any unauthorized alterations to data, ensuring its integrity is maintained. Robust change management procedures are equally essential; they monitor and control any modifications made to network configurations, software, or hardware to prevent unauthorized changes that may introduce vulnerabilities. Additionally, comprehensive logging and auditing practices are vital for monitoring network activities and detecting anomalies that could compromise data integrity.

Availability ensures that network resources and services are accessible when needed. Redundancy, in the form of backup servers, routers, and internet connections, can mitigate the impact of hardware failures or network outages. Load balancing and failover mechanisms are commonly used to ensure seamless service availability. Protection against Distributed Denial of Service (DDoS) attacks is critical, and organizations should deploy DDoS mitigation solutions to filter out malicious traffic and maintain service availability. Furthermore, disaster recovery plans should be developed and regularly tested to ensure that data and services can be restored quickly in case of unexpected incidents.

Collectively, these requirements form a comprehensive framework for ensuring the security and resilience of an organization’s computer network.

Cryptography

Cryptography is a field of study and practice within computer science and mathematics that focuses on securing information by converting it into an unintelligible form, known as ciphertext, through the use of mathematical algorithms and keys.

The messages to be encrypted, known as the plaintext, are transformed by a function that is parameterized by a key. The output of the encryption process, known as the ciphertext, is then transmitted, often by messenger or radio. We assume that the enemy, or intruder, hears and accurately copies down the complete ciphertext. However, unlike the intended recipient, he does not know what the decryption key is and so cannot decrypt the ciphertext easily.

We will use $C = E_K(P)$ to mean that the encryption of the plaintext $P$ using key $K$ gives the ciphertext $C$. Similarly, $P = D_K(C)$ represents the decryption of $C$ to get the plaintext again. It then follows that $D_K(E_K(P)) = P$. Our basic model is a stable and publicly known general method parameterized by a secret and easily changed key. The idea that the cryptanalyst knows the algorithms and that the secrecy lies exclusively in the keys is called Kerckhoffs's principle: all algorithms must be public; only keys are secret.
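As a minimal sketch of this model, the snippet below writes encryption as a function of a key and a plaintext and checks that decrypting with the same key recovers the plaintext. The repeating-key XOR cipher and the function names are assumptions chosen for brevity; this cipher is trivially breakable and only illustrates the notation.

```python
from itertools import cycle

def encrypt(key: bytes, plaintext: bytes) -> bytes:
    """E_K(P): XOR each plaintext byte with the repeating key (toy cipher, not secure)."""
    return bytes(p ^ k for p, k in zip(plaintext, cycle(key)))

def decrypt(key: bytes, ciphertext: bytes) -> bytes:
    """D_K(C): XOR is its own inverse, so decryption repeats the same operation."""
    return encrypt(key, ciphertext)

key = b"secret"
plaintext = b"attack at dawn"
ciphertext = encrypt(key, plaintext)

# Decrypting with the right key recovers the plaintext; a wrong key does not.
assert decrypt(key, ciphertext) == plaintext
assert decrypt(b"public", ciphertext) != plaintext
```

The method itself is public here, in the spirit of Kerckhoffs's principle; only `key` is meant to be secret.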

Symmetric-key algorithms

Modern cryptography uses the same basic ideas as traditional cryptography (transposition and substitution), but its emphasis is different. Traditionally, cryptographers have used simple algorithms. Nowadays, the reverse is true: the object is to make the encryption algorithm so complex and involuted that even if the cryptanalyst acquires vast mounds of enciphered text of his own choosing, he will not be able to make any sense of it at all without the key.

Symmetric-key cryptography refers to encryption methods in which both the sender and receiver share the same key or, less commonly, in which their keys are different, but related in an easily computable way. In particular, we will focus on block ciphers, which take an n-bit block of plaintext as input and transform it using the key into an n-bit block of ciphertext.

Cryptographic algorithms can be implemented in either hardware (for speed) or software (for flexibility).

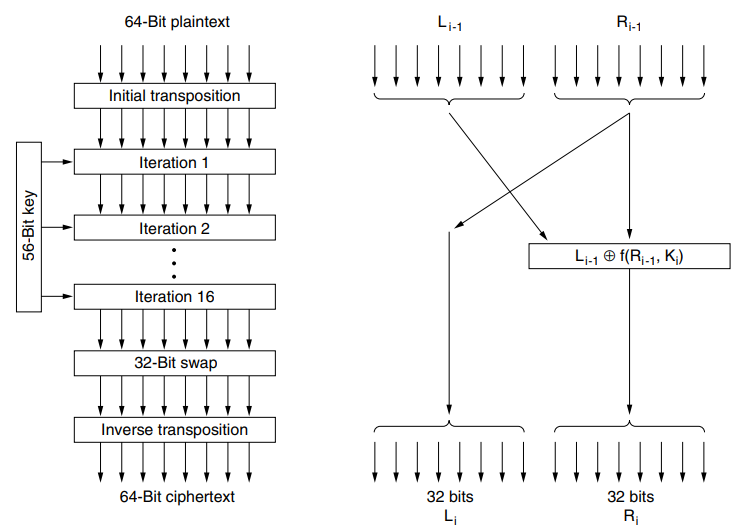

In DES (the Data Encryption Standard), plaintext is encrypted in blocks of 64 bits, yielding 64 bits of ciphertext. The algorithm, which is parameterized by a 56-bit key, has 19 distinct stages.

The first stage is a key-independent transposition on the 64-bit plaintext. The last stage is the exact inverse of this transposition.

The stage prior to the last one exchanges the leftmost 32 bits with the rightmost 32 bits.

The remaining 16 stages are functionally identical but are parameterized by different functions of the key.

The algorithm has been designed to allow decryption to be done with the same key as encryption, a property needed in any symmetric-key algorithm. The steps are just run in the reverse order.

Each stage takes two 32-bit inputs and produces two 32-bit outputs. The left output is simply a copy of the right input. The right output is the bitwise XOR of the left input and a function of the right input and the key for this stage, $K_i$. In symbols, $L_i = R_{i-1}$ and $R_i = L_{i-1} \oplus f(R_{i-1}, K_i)$. Pretty much all the complexity of the algorithm lies in this function.

(Apparently the data is fed into the S-boxes and then into the P-box.) A different 48-bit subset of the 56 key bits is extracted and permuted on each round.
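The round structure described above, where the left output copies the right input and the right output is the left input XORed with a keyed function of the right input, can be sketched as follows. This is not DES: the round function is a SHA-256 stand-in rather than the real S-box and P-box construction, the initial and final transpositions are omitted, and the round keys are made-up values. It only shows why running the same rounds with the keys in reverse order undoes the encryption.

```python
import hashlib

MASK32 = 0xFFFFFFFF

def round_function(right: int, subkey: int) -> int:
    """Stand-in for the DES f function (NOT the real S-box/P-box construction)."""
    data = right.to_bytes(4, "big") + subkey.to_bytes(8, "big")
    return int.from_bytes(hashlib.sha256(data).digest()[:4], "big")

def feistel(block: int, subkeys: list[int]) -> int:
    """Run one round per subkey over a 64-bit block, then swap the two halves."""
    left, right = block >> 32, block & MASK32
    for k in subkeys:
        # New left = old right; new right = old left XOR f(old right, round key).
        left, right = right, left ^ round_function(right, k)
    return (right << 32) | left  # final exchange of the 32-bit halves

subkeys = [0x0123456789AB + i for i in range(16)]  # hypothetical round keys
plaintext = 0x0123456789ABCDEF
ciphertext = feistel(plaintext, subkeys)

# Decryption is the same procedure with the round keys in reverse order.
assert feistel(ciphertext, subkeys[::-1]) == plaintext
```

Note that `round_function` never needs to be inverted, which is why the real function can be as complicated as the designers like.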

Triple DES: uses 2 or 3 keys and 3 executions of the DES algorithm. The key length is 112 or 168 bits.

Rijndael is a family of ciphers with different key and block sizes. For AES, NIST selected three members of the Rijndael family, each with a block size of 128 bits, but three different key lengths: 128, 192 and 256 bits. The algorithm described by AES is a symmetric key algorithm meaning the same key is used for both encrypting and decrypting the data.

Rijndael is based on Galois field theory, which gives it some provable security properties. Like DES, Rijndael uses substitutions and permutations, and it also uses multiple rounds. The number of rounds depends on the key size and block size, being 10 for 128-bit keys with 128-bit blocks and moving up to 14 for the largest key or the largest block.

However, unlike DES, all operations involve entire bytes, to allow for efficient implementations in both hardware and software.

During the calculation, the current state of the data is maintained in a byte array, state, whose size is NROWS × NCOLS. For 128-bit blocks, this array is 4 × 4 bytes.

The code starts out by expanding the key into 11 arrays of the same size as the state. Suffice it to say that the round keys are produced by repeated rotation and XORing of various groups of key bits.

There is one more step before the main computation begins: the first round key, rk[0], is XORed into state, byte for byte. In other words, each of the 16 bytes in state is replaced by the XOR of itself and the corresponding byte in rk[0].
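The byte-for-byte XOR of a round key into the state can be sketched as below. The 4 × 4 layout follows the text; the function name and the sample byte values are made up for illustration, and no key expansion is performed here.

```python
def add_round_key(state: list[list[int]], round_key: list[list[int]]) -> list[list[int]]:
    """Replace every state byte with the XOR of itself and the matching round-key byte."""
    return [[s ^ k for s, k in zip(state_row, key_row)]
            for state_row, key_row in zip(state, round_key)]

# Hypothetical 4 x 4 state and round key (one byte per cell).
state = [[4 * r + c for c in range(4)] for r in range(4)]
round_key = [[0xFF] * 4 for _ in range(4)]

mixed = add_round_key(state, round_key)

# XORing the same round key in again restores the original state.
assert add_round_key(mixed, round_key) == state
```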

Public-key algorithms

No matter how strong a cryptosystem was, if an intruder could steal the key, the system was worthless.

Public-key cryptography is the field of cryptographic systems that use pairs of related keys. Each key pair consists of a public key and a corresponding private key. Key pairs are generated with cryptographic algorithms based on mathematical problems termed one-way functions. Security of public-key cryptography depends on keeping the private key secret; the public key can easily be openly distributed without compromising security. In a public-key encryption system, anyone with a public key can encrypt a message, yielding a ciphertext, but only those who know the corresponding private key can decrypt the ciphertext to obtain the original message.

The keyed encryption algorithm, $E$, and the keyed decryption algorithm, $D$, must meet the following requirements:

- $D(E(P)) = P$.

- It is exceedingly difficult to deduce $D$ from $E$.

- $E$ cannot be broken by a chosen plaintext attack.

One algorithm that meets these requirements is known by the initials of its three discoverers (Rivest, Shamir, Adleman): RSA. It has survived all attempts to break it for more than 30 years and is considered very strong. Much practical security is based on it. For this reason, Rivest, Shamir, and Adleman were given the 2002 ACM Turing Award. Its major disadvantage is that it requires keys of at least 1024 bits for good security, with 2048 bits or more commonly recommended today (versus 128 bits for symmetric-key algorithms), which makes it quite slow.

You do remember the RSA algorithm, right?
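As a reminder, here is a toy sketch of textbook RSA. The notes do not spell the algorithm out, so the steps below follow the standard description: pick two primes, publish the modulus and a public exponent, and keep the matching private exponent secret. The primes are tiny and there is no padding, so this is for intuition only and not for real use.

```python
# Key generation with deliberately tiny primes (illustration only).
p, q = 61, 53
n = p * q                     # public modulus
phi = (p - 1) * (q - 1)
e = 17                        # public exponent, chosen coprime to phi
d = pow(e, -1, phi)           # private exponent: e * d = 1 (mod phi)

def rsa_encrypt(m: int) -> int:
    """Anyone who knows the public key (e, n) can encrypt."""
    return pow(m, e, n)

def rsa_decrypt(c: int) -> int:
    """Only the holder of the private exponent d can decrypt."""
    return pow(c, d, n)

message = 65                  # must be smaller than n
cipher = rsa_encrypt(message)
assert rsa_decrypt(cipher) == message
```

The three-argument `pow` with exponent `-1` computes a modular inverse and needs Python 3.8 or later.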

Digital Signatures

The authenticity of many legal, financial, and other documents is determined by the presence or absence of an authorized handwritten signature. And photocopies do not count.

For computerized message systems to replace the physical transport of paper-and-ink documents, a method must be found to allow documents to be signed in an unforgeable way.

Basically, what is needed is a system by which one party can send a signed message to another party in such a way that the following conditions hold:

- The receiver can verify the claimed identity of the sender.

- The sender cannot later repudiate the contents of the message.

- The receiver cannot possibly have concocted the message himself.

In other words: once the sender has signed and sent a message, the sender cannot later claim "I did not send that", and the receiver cannot forge a message.

The first requirement is needed, for example, in financial systems. When a customer’s computer orders a bank’s computer to buy a ton of gold, the bank’s computer needs to be able to make sure that the computer giving the order really belongs to the customer whose account is to be debited. In other words, the bank has to authenticate the customer (and the customer has to authenticate the bank).

The second requirement is needed to protect the bank against fraud. Suppose that the bank buys the ton of gold, and immediately thereafter the price of gold drops sharply. A dishonest customer might then proceed to sue the bank, claiming that he never issued any order to buy gold. The property that no party to a contract can later deny having signed it is called nonrepudiation. The digital signature schemes that we will now study help provide it.

The third requirement is needed to protect the customer in the event that the price of gold shoots up and the bank tries to construct a signed message in which the customer asked for one bar of gold instead of one ton.

… You remember Big Brother and encrypting with your own private key
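The "encrypting with your own private key" idea can be sketched with a hash-then-sign toy. This is an assumption-laden illustration rather than a real signature scheme: it uses textbook RSA without padding, a key built from two small well-known Mersenne primes, and made-up function names.

```python
import hashlib

# Toy key pair built from two Mersenne primes (far too small for real use).
p, q = 2**61 - 1, 2**31 - 1
n = p * q
e = 65537
d = pow(e, -1, (p - 1) * (q - 1))   # private exponent, known only to the signer

def digest(message: bytes) -> int:
    """Hash the message and reduce it into the range of the modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n

def sign(message: bytes) -> int:
    """Signer applies the private key to the message digest."""
    return pow(digest(message), d, n)

def verify(message: bytes, signature: int) -> bool:
    """Anyone can check the signature using only the public key (e, n)."""
    return pow(signature, e, n) == digest(message)

order = b"buy 1 ton of gold"
signature = sign(order)
print(verify(order, signature))                   # the genuine order
print(verify(b"buy 1 bar of gold", signature))    # an altered order
```

Because only the customer holds `d`, a valid signature ties the customer to the order (nonrepudiation), and the bank cannot produce a valid signature for an altered order on its own.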

Communication security: Firewalls, IPSec, Wireless

Firewalls

In addition to the danger of information leaking out, there is also a danger of information leaking in. In particular, viruses, worms, and other digital pests can breach security, destroy valuable data, and waste large amounts of administrators’ time trying to clean up the mess they leave. Often they are imported by careless employees who want to play some nifty new game.

Firewalls are just a modern adaptation of that old medieval security standby: digging a deep moat around your castle. This design forced everyone entering or leaving the castle to pass over a single drawbridge, where they could be inspected by the I/O police.

With networks, the same trick is possible: a company can have many LANs connected in arbitrary ways, but all traffic to or from the company is forced through an electronic drawbridge (firewall). No other route exists.

The firewall acts as a packet filter. It inspects each and every incoming and outgoing packet. Packets meeting some criterion described in rules formulated by the network administrator are forwarded normally. Those that fail the test are unceremoniously dropped.

The filtering criterion is typically given as rules or tables that list sources and destinations that are acceptable, sources and destinations that are blocked, and default rules about what to do with packets coming from or going to other machines. In the common case of a TCP/IP setting, a source or destination might consist of an IP address and a port.

Ports indicate which service is desired. For example, TCP port 25 is for mail, and TCP port 80 is for HTTP. Some ports can simply be blocked. For example, a company could block incoming packets for all IP addresses combined with TCP port 79. This port was once popular for the Finger service, used to look up people's email addresses, but is little used today.
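A rule table of this kind can be sketched as a first-match list with a default action. The rule format, the addresses (taken from the documentation range 192.0.2.0/24), and the choice of "drop" as the default are all assumptions made for illustration, not the syntax of any real firewall.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Rule:
    action: str                      # "allow" or "drop"
    dst_port: Optional[int] = None   # None matches any port
    dst_ip: Optional[str] = None     # None matches any address

    def matches(self, dst_ip: str, dst_port: int) -> bool:
        return self.dst_ip in (None, dst_ip) and self.dst_port in (None, dst_port)

# Hypothetical rule set for incoming TCP packets, checked in order.
RULES = [
    Rule("drop", dst_port=79),                          # Finger: blocked for every address
    Rule("allow", dst_port=25, dst_ip="192.0.2.10"),    # mail server
    Rule("allow", dst_port=80, dst_ip="192.0.2.20"),    # Web server
]
DEFAULT_ACTION = "drop"   # anything not explicitly allowed is dropped

def filter_packet(dst_ip: str, dst_port: int) -> str:
    """Return the action of the first matching rule, or the default action."""
    for rule in RULES:
        if rule.matches(dst_ip, dst_port):
            return rule.action
    return DEFAULT_ACTION

print(filter_packet("192.0.2.10", 25))
print(filter_packet("192.0.2.10", 79))
```

Each packet is judged on its own here, with no memory of earlier packets.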

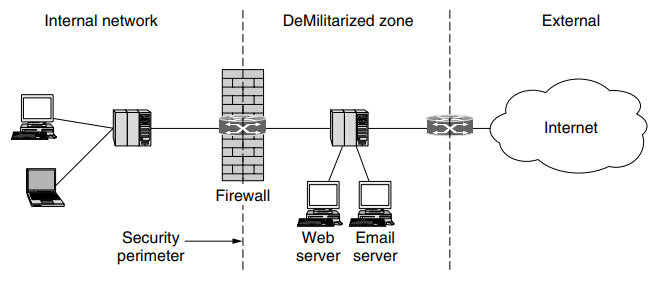

The difficulty is that network administrators want security but cannot cut off communication with the outside world. That arrangement would be much simpler and better for security, but there would be no end to user complaints about it. This is where arrangements such as the DMZ (DeMilitarized Zone) come in handy.

The DMZ is the part of the company network that lies outside of the security perimeter. Anything goes here. By placing a machine such as a Web server in the DMZ, computers on the Internet can contact it to browse the company Web site. Now the firewall can be configured to block incoming TCP traffic to port 80 so that computers on the Internet cannot use this port to attack computers on the internal network.

Originally, firewalls applied a rule set independently for each packet, but it proved difficult to write rules that allowed useful functionality but blocked all unwanted traffic. Stateful firewalls map packets to connections and use TCP/IP header fields to keep track of connections. This allows for rules that, for example, allow an external Web server to send packets to an internal host, but only if the internal host first establishes a connection with the external Web server. Such a rule is not possible with stateless designs that must either pass or drop all packets from the external Web server.
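The stateful rule in this paragraph, where an external server may send packets in only after an internal host has opened the connection, can be sketched with a simple connection table. The class and method names are hypothetical, and a real stateful firewall would also track TCP flags, timeouts, and connection teardown.

```python
class StatefulFirewall:
    """Toy connection tracker keyed on (internal ip, internal port, external ip, external port)."""

    def __init__(self) -> None:
        self.connections: set[tuple[str, int, str, int]] = set()

    def outbound(self, src_ip: str, src_port: int, dst_ip: str, dst_port: int) -> str:
        """Internal hosts may open connections; remember each one."""
        self.connections.add((src_ip, src_port, dst_ip, dst_port))
        return "allow"

    def inbound(self, src_ip: str, src_port: int, dst_ip: str, dst_port: int) -> str:
        """External packets pass only if they belong to a connection opened from inside."""
        key = (dst_ip, dst_port, src_ip, src_port)
        return "allow" if key in self.connections else "drop"

fw = StatefulFirewall()
# Unsolicited packet from an external Web server: no matching connection yet.
print(fw.inbound("203.0.113.5", 80, "10.0.0.7", 51000))
# The internal host connects first, after which the reply is accepted.
fw.outbound("10.0.0.7", 51000, "203.0.113.5", 80)
print(fw.inbound("203.0.113.5", 80, "10.0.0.7", 51000))
```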

Another level of sophistication up from stateful processing is for the firewall to implement application-level gateways. This processing involves the firewall looking inside packets, beyond even the TCP header, to see what the application is doing. With this capability, it is possible to distinguish HTTP traffic used for Web browsing from HTTP traffic used for peer-to-peer file sharing.

As the above discussion should make clear, firewalls violate the standard layering of protocols. They are network layer devices, but they peek at the transport and application layers to do their filtering.

Virtual Private Networks

Many companies have offices and plants scattered over many cities, sometimes over multiple countries. In the olden days, before public data networks, it was common for such companies to lease lines from the telephone company between some or all pairs of locations. Some companies still do this. A network built up from company computers and leased telephone lines is called a private network.

Private networks work fine and are very secure. If the only lines available are the leased lines, no traffic can leak out of company locations and intruders have to physically wiretap the lines to break in, which is not easy to do. The problem with private networks is that leasing a dedicated T1 line between two points costs thousands of dollars a month, and T3 lines are many times more expensive. When public data networks and later the Internet appeared, many companies wanted to move their data (and possibly voice) traffic to the public network, but without giving up the security of the private network.

This demand soon led to the invention of VPNs (Virtual Private Networks), which are overlay networks on top of public networks but with most of the properties of private networks.

One popular approach is to build VPNs directly over the Internet. A common design is to equip each office with a firewall and create tunnels through the Internet between all pairs of offices. A further advantage of using the Internet for connectivity is that the tunnels can be set up on demand to include, for example, the computer of an employee who is at home or traveling as long as the person has an Internet connection.

This flexibility is much greater than is provided with leased lines, yet from the perspective of the computers on the VPN, the topology looks just like the private network case.

IPSec VPN

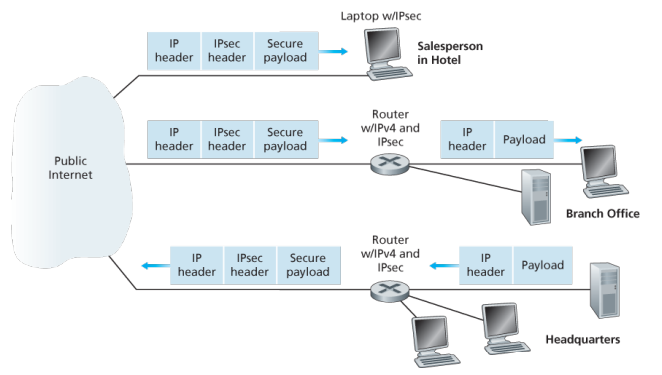

To get a feel for how a VPN works, consider the following scenario. When a host in headquarters sends an IP datagram to a salesperson in a hotel, the gateway router in headquarters converts the vanilla IPv4 datagram into an IPsec datagram and then forwards this IPsec datagram into the Internet. The payload of the IPsec datagram includes an IPsec header, which is used for IPsec processing; furthermore, the payload of the IPsec datagram is encrypted. When the IPsec datagram arrives at the salesperson’s laptop, the OS in the laptop decrypts the payload (and provides other security services, such as verifying data integrity) and passes the unencrypted payload to the upper-layer protocol (for example, to TCP or UDP).

In the IPsec protocol suite, there are two principal protocols: the Authentication Header (AH) protocol and the Encapsulating Security Payload (ESP) protocol. When a source IPsec entity (typically a host or a router) sends secure datagrams to a destination entity (also a host or a router), it does so with either the AH protocol or the ESP protocol. The AH protocol provides source authentication and data integrity but does not provide confidentiality. The ESP protocol provides source authentication, data integrity, and confidentiality. Because confidentiality is often critical for VPNs and other IPsec applications, the ESP protocol is much more widely used than the AH protocol.

IPsec datagrams are sent between pairs of network entities, such as between two hosts, between two routers, or between a host and router. Before sending IPsec datagrams from source entity to destination entity, the source and destination entities create a network-layer logical connection. This logical connection is called a security association (SA). An SA is a simplex logical connection; that is, it is unidirectional from source to destination. If both entities want to send secure datagrams to each other, then two SAs (that is, two logical connections) need to be established, one in each direction.

When the system is brought up, each pair of firewalls has to negotiate the parameters of its SA, including the services, modes, algorithms, and keys. If IPsec is used for the tunneling, it is possible to aggregate all traffic between any pair of offices onto a single authenticated, encrypted SA, thus providing integrity control, secrecy, and even considerable immunity to traffic analysis.

To a router within the Internet, a packet traveling along a VPN tunnel is just an ordinary packet. The only thing unusual about it is the presence of the IPsec header after the IP header, but since these extra headers have no effect on the forwarding process, the routers do not care about this extra header.

When two of the institution’s hosts communicate over a path that traverses the public Internet, the traffic is encrypted before it enters the Internet.

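Suppose the institution consists of a headquarters office, a branch office and, say, n traveling salespersons. With bidirectional IPsec traffic between headquarters and the branch office, and between headquarters and each salesperson, the headquarters gateway router maintains 2 + 2n SAs: two for the branch office and two for each salesperson.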
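The 2 + 2n count follows from SAs being unidirectional. A small sketch, assuming the headquarters gateway exchanges traffic in both directions with the branch office and with each of the n salespersons (the function name is made up):

```python
def headquarters_sa_count(num_salespersons: int) -> int:
    """SAs at the headquarters gateway: one per direction for each remote peer."""
    peers = 1 + num_salespersons   # the branch office plus every salesperson
    directions = 2                 # an SA is simplex, so each peer needs two
    return directions * peers

for n in (0, 1, 10):
    print(n, headquarters_sa_count(n))
```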

Web Security: SSL

When the Web burst into public view, it was initially used for just distributing static pages. However, before long, some companies got the idea of using it for financial transactions, such as purchasing merchandise by credit card, online banking, and electronic stock trading. These applications created a demand for secure connections.

In 1995, Netscape Communications Corp., the then-dominant browser vendor, responded by introducing a security package called SSL (Secure Sockets Layer) to meet this demand. This software and its protocol are now widely used, for example, by Firefox, Safari, and Internet Explorer.

SSL builds a secure connection between two sockets, including:

- Parameter negotiation between client and server.

- Authentication of the server by the client.

- Secret communication.

- Data integrity protection.

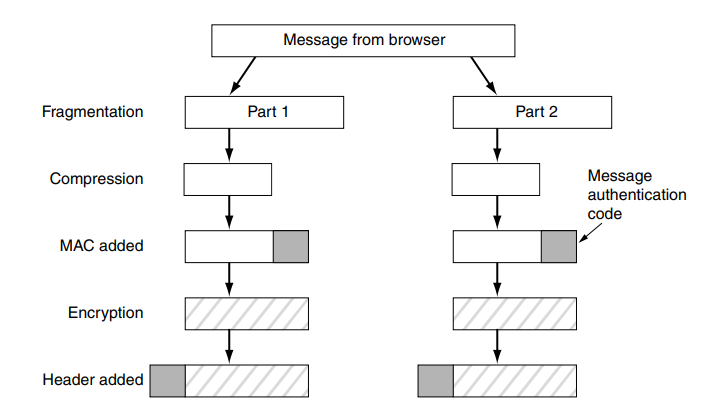

Effectively it is a new layer interposed between the application layer and the transport layer, accepting requests from the browser and sending them down to TCP for transmission to the server. Once the secure connection has been established, SSL’s main job is handling compression and encryption. When HTTP is used over SSL, it is called HTTPS (Secure HTTP), even though it is the standard HTTP protocol. It normally runs on its own port (443) instead of port 80.

As an aside, SSL is not restricted to Web browsers, but that is its most common application. It can also provide mutual authentication.

SSL supports a variety of different options. These options include the presence or absence of compression, the cryptographic algorithms to be used, and some matters relating to export restrictions on cryptography.

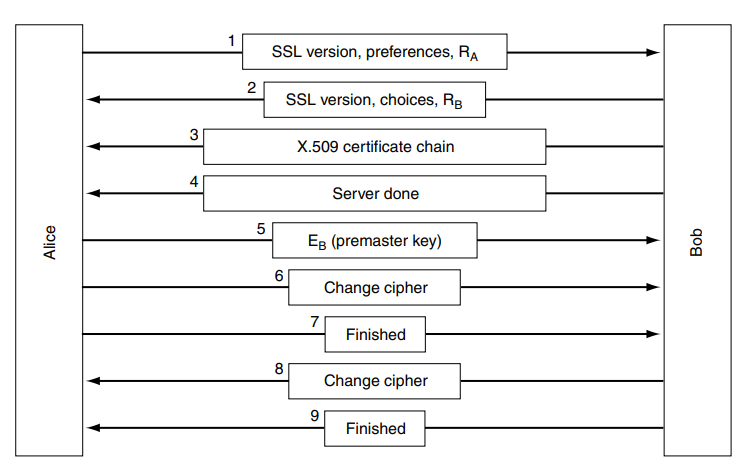

SSL consists of two subprotocols, one for establishing a secure connection and one for using it. Let us start out by seeing how secure connections are established.

SSL supports multiple cryptographic algorithms. The strongest one uses triple DES with three separate keys for encryption and SHA-1 for message integrity.

- The client sends a list of cryptographic algorithms it supports, along with a client nonce.

- From the list, the server chooses a symmetric algorithm (for example, AES), a public key algorithm, and a MAC algorithm. It sends back to the client its choices, as well as a certificate and a server nonce.

- The client verifies the certificate, extracts the server’s public key, generates a Pre-Master Secret (PMS), encrypts the PMS with the server’s public key, and sends the encrypted PMS to the server.

- Using the same key derivation function, as specified by the SSL standard, the client and server independently compute the Master Secret (MS) from the PMS and nonces. The MS is then sliced up to generate the two encryption keys and two MAC keys. Furthermore, when the chosen symmetric cipher employs CBC (as with 3DES or AES), two initialization vectors (IVs), one for each side of the connection, are also obtained from the MS.
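The last step of the handshake, where both sides turn the PMS and the nonces into the same set of keys, can be sketched as follows. This is not the key derivation function from the SSL standard: `expand` is a stand-in built from HMAC-SHA256, and the labels, key sizes, and key names are assumptions chosen for illustration.

```python
import hashlib
import hmac
import os

def expand(secret: bytes, seed: bytes, length: int) -> bytes:
    """Stand-in KDF: stretch secret and seed into `length` bytes with HMAC-SHA256."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hmac.new(secret, seed + counter.to_bytes(4, "big"), hashlib.sha256).digest()
        counter += 1
    return out[:length]

def derive_keys(pms: bytes, client_nonce: bytes, server_nonce: bytes) -> dict[str, bytes]:
    """Compute the MS from the PMS and nonces, then slice it into keys and IVs."""
    ms = expand(pms, b"master secret" + client_nonce + server_nonce, 48)
    names = ["client_mac", "server_mac", "client_enc", "server_enc", "client_iv", "server_iv"]
    block = expand(ms, b"key expansion" + client_nonce + server_nonce, 16 * len(names))
    return {name: block[16 * i:16 * (i + 1)] for i, name in enumerate(names)}

# Both sides know the nonces (sent in the clear) and the PMS (sent encrypted).
client_nonce, server_nonce, pms = os.urandom(32), os.urandom(32), os.urandom(48)
client_view = derive_keys(pms, client_nonce, server_nonce)
server_view = derive_keys(pms, client_nonce, server_nonce)
assert client_view == server_view
```

Because the derivation is deterministic, no key material beyond the encrypted PMS ever needs to cross the network, and the fresh nonces ensure that each connection ends up with different keys.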