(REREAD THREE EASY PIECES AND MAKE NOTES AGAIN)

Background: there are like fork() and work() to create multiple processes but we dont usually do that in programming and leave it to OS only Instead we focus on threads There are API’s like pthread_create and pthread_join to do stuffs with an abstraction called threads -Create a pthread_t object -Create the actual thread by passing the object to pthread_create() in main function -Wait for it if you want (pthread_join waits for the thread to finish and handshakes them and put them together or something) Threads achieve actual parallelism (multiple processors) or avoid losing time on IO operations (processes could do it too but they are heavy context loaded) Whose problem is threads not being synchronized ie accessing a memory at the same time and so ons. Threads do what they do (see the hardware) so if you want them to behave you need to make them behave To provide exclusion in critical sections, there are locks API’s to ensure atomicity, they are implemented by OS with help of hardware Some programming concepts here like creating thread, joining it, and so on using pthread library How those locks are implemented: (metrics: correctness, fairness, performance) 1. In single processors: lock function: disable interrups every kind so no interrupts and the thread can continue -correctly works though but not fair -putting too much trust in application by disabling interrupts -losing interrupts if disabled for long time -not for multiprocessors -inefficient -ONLY USED BY OS ITSELF

2. Try again with simple variable method (such are called spin locks)

Simple variable to indicate whether any thread is under the function or not

lockStrucuter {some flag variable; 0 means available, 1 means not}

lockFunction{ while flag is 1, wait; else set it to 1 }

unlockFunction{ set it to 0 }

>Still haunted by interrupts

-Say a thread is checking flag and is about to enter the code; but is late to set the flag as unavailable (as they are two instructions)

-Then another comes along and enters so both are inside the code at the same time

3. Hardware support:

One instruction: testAndSet(old pointer, new value):

Put new value into this old pointer and return the old value (atomotically)

[More like store and return]

lockStrucuter {some flag variable; 0 means available, 1 means not}

lockFunction{ while testAndSet(flag, 1) == 1 wait}

unlockFunction{ set it to 0 }

Metrics:

Correctness: works

Fairness: no guarantee (may lead to starvation)

Performance: painful overheads in single processors, if multiple then good only if number of processor equals number of threads

-if a thread which has got lock is interrupted (timer) in critical section then others cannot access that section unless that same thread is scheduled again to release the lock (deteriotes as number of threads increase)

-I mean this thread has lock got interrupted (holds the lock still), then others will be scheduled again but they just spin like idiots

Alternative: compareAndSwap(old pointer, expected value, new value):

If old pointer value equals that of expected then swap old with new else do nothing and return actual value

lockFunction{ while CompareAndSwap(flag, 0, 1) == 1 then wait}

4. Solution: Just yield (OS API) yourself when you enter the lock and its unavailable giving other people chances (still inefficient)

There are three states of threads (running, ready and blocked) yielding puts from running to ready

5. Using queues: need some control over which thread next gets to acquire the lock after the current holder releases it

lockStrucuter {flag; guard; queue pointer}; guard is a lock to protect the body of lock and unlock functions

[both flag and guard initialized as available]

lockFunction:

while testAndSet(guard, 1) == 1: (note guard not flag) //acquire guard lock by spinning

if (flag available)

flag = unavaialble // get it

guard = available

else:

add the thread to queue (with its id API agian)

guard = available

park();

unlockFunction:

while testAndSet(guard, 1) == 1:

if queue is emptry: //let go of lock, no one wants it

flag = available

else:

unpark (queue remove) //flag directly passsed to the woken up thread

//when a thread is woken up it will be as if it is returing from park()

guard = 0

Problem: When a thread is about to be parked (not complete) in hope that i will wake up when lock is free then suddently thread with lock is scheduled

then this thread will free the lock, meanwhile the subsequent park by that earlier thread sleeps for a very long time (potentially forever) because the unpark() shcedules the thread that are sleeping and not ready ones

solution is to have a setpark() API which indicates that the thread is about to be parked

then, if the about-to-park thread is interrupted before parking then its park() returns immediately instead of sleeping

NOTE: Something around that nature is the linux;s futex

Protect your data structures from thread danger: -With data of that class, put a lock as well -Acquire the lock when calling a method that manipulates the data strucutre and rlease when reutring from the call -SLOW AS FUCK

Conditional variables: (besides lock another requirement for programming)

void *child (void *arg)

printf("child");

done = 1 //need to send message to parrent waiting for us

return NULL;

int main()

pthread_t c;

pthread_create;

while (done == 0)

//spin //wait until the message arrives (slow cause of spinning)

return 0

-Condition variable is explicitly a queue where thread can put themselves on while state of execution is not as desired

-Some other thread, when it changes said state, can then wake one or more of those waiting threads and thus allow them to continue by signalling them

-wait(mutex, cond variable) assumes that mutex is held lock, releases the lock and put the calling thread to sleep (atomically)

when the thread wakes up (after some other thread has signaled it) it must re-acquire the lock before returing to the caller

-signal wakes one of the threads that are sleeping on the conditional queue (if more are sleeping then depends on queues) ie puts from sleeping to ready state

CODE PART:

include

int done = 0; pthread_mutex_t m = PTHREAD_MUTEX_INITIALIZER; pthread_cond_t c = PTHREAD_COND_INITIALIZER;

void* child(void *arg) { std::cout << “child is here” << std::endl;

//Holding the lock and then signalling the parent

pthread_mutex_lock(&m);

done = 1;

pthread_cond_signal(&c); //send the signals to the threads on the conditional variable c

pthread_mutex_unlock(&m);

return NULL;

}

int main() { std::cout << “parent begins” << std::endl; pthread_t p; pthread_create(&p, NULL, child, NULL);

//Holding a lock and then waiting on the children

pthread_mutex_lock(&m);

while (done == 0) //while the child is not sent its done message

{

pthread_cond_wait(&c, &m); //put yourself onto sleep in the conditional variable c, while releasing the mutex m

}

pthread_mutex_unlock(&m); //if woken up and the signal is done we are good

//case 1: child has not done yet; so parent puts itself into waiting state

//case 2: child is done so parent just simply returns

std::cout << "parent ends" << std::endl;

return 0;

}

-The code has a mutex vairable, a conditional variable and another done variable

- DO WE REALLY NEED THE VARIABLE DONE? Yes if not then the case 2 will be broken

- DO WE NEED THE mutex variable (does only cond variable not work)? Nope what if parent is interrupted just before its about to sleep. There is no lock so the child will enter its region thus signaling the parent already. Now when parent turns it sleeps forever

- Common tip (sometimes ignorable but not suggested): HOLD THE LOCK WHEN WAITING OR SIGNALING

- Do we need the while loop? Yes better to do cause you see those above 2 conditions could do harm so always good to have

APPARENTLY FAMOUS CONSUMER-PRODUCER PROBLEM: -imagine one or more producer threads and one or more consumer threads -producers generate data buffer and place them in a buffer; and consume them in some way -eg: grep foo file.txt | wc -l -1. grep writes lines from file.txt with the string foo in them to what it thinks is standard output, -2. the unix shells redirects the output to unit pipe -3. The other end of unix pipe is connected to the standard input of the process wc -Here, grep is producer, wc is consumer; between them is an in-kernel bounded buffer (hence bounded buffer is a shared resource)

Problem: we need to do producer-consumer problem with an integer buffer

int buffer; bool IsEmpty = true; //initially the buffer is emtpy void put(int value) { assert(IsEmpty == true); IsEmpty = false; buffer = value; } int get() { assert(IsEmpty == false); IsEmpty = true; return buffer; }

Solution 1: (since the buffer accessing part is to be locked)

void *producer(void *arg) { std::cout << “producer here” << std::endl; pthread_mutex_lock(&lock); put(10); pthread_mutex_unlock(&lock); return NULL; } void *consumer(void *arg) { std::cout << “consumer here” << std::endl; pthread_mutex_lock(&lock); int tmp = get(); std::cout << tmp << std::endl; pthread_mutex_unlock(&lock); return NULL; }

Problem: Works only when producer enters first than the consumer

Solution 2: we need to implement the condtional variables

void *producer(void *arg) { std::cout << “producer here” << std::endl; pthread_mutex_lock(&lock); if (IsEmpty false) { pthread_cond_wait(&cond, &lock); //if buffer is not empty then wait in queue } put(10); pthread_cond_signal(&cond); //after putting the value, signal the waiting consumers pthread_mutex_unlock(&lock); return NULL; } void *consumer(void *arg) { std::cout << "consumer here" << std::endl; pthread_mutex_lock(&lock); if (IsEmpty true) { pthread_cond_wait(&cond, &lock); //if the buffer is empty then wait in queue until signalled by the producer } int tmp = get(); std::cout << tmp << std::endl; pthread_cond_signal(&cond); //signal that the buffer is empty pthread_mutex_unlock(&lock); return NULL; }

Modificaiton: Lets modify a bit and make the writing loopy times and reading it forever untill possible (so we expect the output to be printed while end behaviour is consumer sleeping forever)

loops is a global variable

void *producer(void *arg) { std::cout << “producer here” << std::endl; for (int i = 0; i < loops; ++i) { pthread_mutex_lock(&lock); if (IsEmpty false) { pthread_cond_wait(&cond, &lock); } put(10); pthread_cond_signal(&cond); pthread_mutex_unlock(&lock); } return NULL; } void *consumer(void *arg) { std::cout << "consumer here" << std::endl; while(1) { pthread_mutex_lock(&lock); if (IsEmpty true) { pthread_cond_wait(&cond, &lock); } int tmp = get(); std::cout << tmp << std::endl; pthread_cond_signal(&cond); pthread_mutex_unlock(&lock); } return NULL; }

Problem: But if we have multiple threads, say one producer and two consumers -what we expect is to write the buffer 10 times by producer and read it ‘loops’ times by consumer (does not matter which one reads) -So there should be no assertion before the buffer has been written and read loops number of times -First T1 runs and acquires the lock, but since buffer is empty so it waits sleeping (which releases the lock) -Now producer walks in and does its writing once, then signals which puts the first thread from sleeping to ready; and if the consumer threads are not scheduled yet producer sleeps on next interation as the buffer is still full -Regular expection is the first thread T1 to be running and do its job -But since there are two of them say second one T2 gets running -It will then consume the value and signals the producer -But before producer gets its turn say thread1 gets its turn then it wakes up into the part of the code where it left sleeping -So it has lock and tries to read the buffer but its been already read by T2 so an assertion error -Problem is that producer woke T1 but it didnot run (waking vs running) instead run T2 which changed the state -Problem is to prevent T1 from reading the buffer when T2 has already read its NOTE: this way of giving signal ie putting from sleeping to ready is called mesa semantics -Another alternative is to let the thread run as soon as it wakes called hoare semantics (sounds better but complicated) -So virtually all use mesa

Solution 3: You while instead of if, because when the thread T1 wakes up it will again check the IsEmpty then go sleep again -NOTE to always use while loops (talking about inner while loops) with condition variables (may be not required but better to do it)

Problem: -Say the two consumer threads go first and then sleep; -Now producers turn and writes the buffer and sleeps itself, and it wakes one of the threads say T1 -Now T1 does its read and has to wake producer right? But who says? Both Producer and T2 are sleeping so it can wake up T2 as well -If it wakes up T2 then T2 seeing that buffer is emtpy goes to sleeping -As a result all of them are sleeping -ANS: signaling is needed but cautiusly ie consumers should wake up producers and vice versa

Solution 4: A working one using two conditional buffers ie consumers sleep on their own buffers but call on the producers one and vice-versa for the producers (works perfectly with the consumers sleeping at last)

GENERALIZED WITH BETTER EFFICENTY: -Increase the size of buffer, make it like a queue -For single consumer and producers; reduces context switches cost -For multiples (one or both of them) allows concurrente producing or consuming to take place

THE CODE IS AS FOLLOW: IN TOTALLY RUNNABLE FORM: WITH COMMENTS AS WELL

include

pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER; pthread_cond_t for_producers = PTHREAD_COND_INITIALIZER; pthread_cond_t for_consumers = PTHREAD_COND_INITIALIZER; int loops = 100;

void put(int value) { assert(count < MAX); buffer[fill_ptr] = value; fill_ptr = (fill_ptr + 1)%MAX; count += 1; } int get() { assert(count != 0); int tmp = buffer[use_ptr]; use_ptr = (use_ptr + 1)%MAX; count -= 1; return tmp; } void producer(void arg) { for (int i = 0; i < loops; ++i) { std::cout << “producer has acquired the lock” << std::endl; pthread_mutex_lock(&lock); while (count == MAX) { std::cout << “producer sleeping” << std::endl; pthread_cond_wait(&for_producers, &lock); //if buffer is not empty then wait in queue std::cout << “producer woke up” << std::endl; } std::cout << “producer wrote the value ” << i << std::endl; put(i); std::cout << “producer signaled the consumers” << std::endl; pthread_cond_signal(&for_consumers); //after putting the value, signal the waiting consumers std::cout << “producer has released the lock” << std::endl; pthread_mutex_unlock(&lock); } std::cout << “producers loop finished ” << std::endl; return NULL; } void consumer(void arg) { while(1) { std::cout << “consumer ” << (char)arg << ” acquired the lock” << std::endl; pthread_mutex_lock(&lock); while (count == 0) { std::cout << “consumer ” << (char)arg << ” sleeping” << std::endl; pthread_cond_wait(&for_consumers, &lock); //if the buffer is empty then wait in queue until signalled by the producer std::cout << “consumer ” << (char)arg << ” woke up” << std::endl; } std::cout << “consumer’s ” << (char)arg << ” read the value: ”; int tmp = get(); std::cout << std::endl; std::cout << tmp << std::endl; std::cout << “consumer ” << (char*)arg << ” signalled the producers” << std::endl; pthread_cond_signal(&for_producers); //signal that the buffer is empty pthread_mutex_unlock(&lock); } std::cout << “consumer ” << (char*)arg << “loop finished” << std::endl; return NULL; } int main() { pthread_t producer_t, consumer1_t, consumer2_t; char arg1[] = “T1”; char arg2[] = “T2”; pthread_create(&producer_t, NULL, producer, NULL); pthread_create(&consumer1_t, NULL, consumer, arg1); pthread_create(&consumer2_t, NULL, consumer, arg2);

pthread_join(producer_t, NULL);

pthread_join(consumer1_t, NULL);

pthread_join(consumer2_t, NULL);

return 0;

}

ESSENTIALLY: producer: //lock → cond wait (on producer queue) → get → cond signal (on consumer queue) → unlock consumer: //lock → cond wait (on consumer queue) → get → cond signal (on producer queue) → unlock

SEMAPHORES: (to implement both locks and CVs) -Semaphore is an object with non integer value -is initialized as sem_init(&semaphore_var, 0, 1); 1 is the initial value while 0 is for sharing variable between threads of same process, for sharing inter-process is different -interacted by two functions, sem_wait() and sem_post() -sem_wait(sem variable): decrement the value of semaphore by 1 and wait if value is negative case1: returns immediately case2: sleepens the caller -sem_post(sem var): increment the value by 1 and if there are one or more threads waiting, wake one nobody waits here sleeping -NOTE: If a semaphore, when negative is equal to the number of waiting threads. -Dont worry (yet) about the possbile race, assume that the factions they make are performed atomically

As a locking mechanism (as like locks) sem_t m; sem_init(&m, 0, X); the value of x is 1 for locks

sem_wait(&m);

//critical section here

sem_post(&m);

-Initially if thread 1 enters wait(), it decreases the value to 0 and moves into critical section

-while its in critical section, another tries to attemp the lock it decreases to -1 and sleeps waiting

-let any number of thread try the lock so semaphore value is -1, -2, -3 or whatever

-when thread1 completes it icnreaes the value by 1 and wakes one of them

-The woken up runs the critical section and as it leaves increases the value by 1

-In this way the number of waiting threads equal the neative value of the semaphore

As a ordering mechanism (as like CVs) child (): sem_post() //signal herer

main():

create thread

sem_wait() //wait here for child

-The sem variable must be initialized to zero here

Producer and consumer problem: -the idea is the earlier with the locks and CVs implemented by the semaphore equivalents

IMPLEMENTATION:

include

//buffer related paramters const int MAX = 10; int buffer[MAX]; int put_ptr = 0; int get_ptr = 0; int count = 0; int loops = 10; //semaphore related paramters sem_t lock; sem_t for_producers; sem_t for_consumers;

void put(int value)

{

assert(count < MAX);

buffer[put_ptr] = value;

put_ptr = (put_ptr+1)%MAX;

count += 1;

}

int get() { assert(count != 0); int temp = buffer[get_ptr]; get_ptr = (get_ptr+1)%MAX; count -= 1; return temp; }

void* producer (void* arg)

{

//lock → cond wait (consumer queue) → get → cond signal (producer queue) → unlock

for (int i = 0; i < loops; ++i)

{

std::cout << “producer: waiting for lock” << std::endl;

sem_wait(&lock);

std::cout << “producer: acquired lock” << std::endl;

sem_wait(&for_producers);

std::cout << "producer: puts the value " << i << std::endl;

put(i);

std::cout << "producer: signals the consumers" << std::endl;

sem_post(&for_consumers);

std::cout << "producer: releases the lock" << std::endl;

sem_post(&lock);

}

std::cout << "PRODUCER: LOOPS FINISHED" << std::endl;

return NULL;

}

void* consumer (void* arg)

{

//lock → cond wait (producer queue) → get → cond signal (consumer queue) → unlock

while(1)

{

std::cout << “consumer: waiting for lock” << std::endl;

sem_wait(&lock);

std::cout << “consumer: acquired lock” << std::endl;

sem_wait(&for_consumers);

std::cout << "consumer: acuired value" << std::endl;

int tmp = get();

std::cout << tmp << std::endl;

std::cout << "consumer: signaling the producers" << std::endl;

sem_post(&for_producers);

std::cout << "consumer: releasing the lock" << std::endl;

sem_post(&lock);

}

}

int main() { sem_init(&lock, 0, 1); sem_init(&for_producers, 0, MAX); sem_init(&for_consumers, 0, 0); pthread_t consumer1, producer1; pthread_create(&consumer1, NULL, consumer, NULL); pthread_create(&producer1, NULL, producer, NULL);

pthread_join(consumer1, NULL);

pthread_join(producer1, NULL);

return 0;

}

PROBLEM: The problem in this impelmentaiton is that the waiting in mutex earlier used to hold the lock (we passed the lock) but here the lock is held by the thread when it goes into sleeping (dead lock situation). Following scenario:

-consumer is waiting for data from producer

-producer is waiting for lock from consumer

The correct way to do is to reduce the scope of the lock (they say this) ie take the locks inside while waiters outside. A common pattern they say

THE DINING PHILOSOHPERS (common problem posed and solved by djisktra) -Five philosohpers with five forks in between: make most use of concurrency making sure that no philosopher gets starved

-Solution:

while (1)

think()

getforks()

eat()

putforks()

-This while loop applies to each philosohper separately, they may be at any position inside the loop

To solve:

-Need five semaphores each for forks forks[5] each initialized to 1 whose idea is to lock the forks using semaphores as locks

Solution1: getforks(): sem_wait(forks[left(p)] sem_wait(forks[right(p)]

putforks():

sem_post(forks[left(p)]

sem_post(forks[right(p)]

;left and right function gives the forks on left and right of the philosopher p

Problem: Easy to spot, one philosopher may pick fork on its left while the one on its right is picked by another (another ko left thiyo tyo)

as a result they starvie each other

Even if the condition is that if they do not get the next fork they leave the fork then such situation may repeat again, so there is a little chance that they may starve forever

Solution2: (that apparently works; i have been able to see it only when the condition is loosened) getforks(): if (p == 4) sem_wait(forks[right(p)] sem_wait(forks[left(p)] else sem_wait(forks[right(p)] sem_wait(forks[left(p)]

COMMON BUGS: 1. atomiticty-violation: desired serializability among multiple memory accesses is violated (eklot ma execute huna parni implement garda bhayena, bich ma interrput bhayo bhane tension) 2. ordering: desired order between two memory accesses is flipped 3. dead-locks: Thread1: mutex_lock(L1) mutex_lock(L2) Thread2: mutex_locK(L2) mutex_lock(L1)

Condition:

-Mutual exclusion: threads claim exclusive control of resources that they require, thread grabs a lock

-Hold-and-wait: threads hold resources allocated to them (like locks) while waiting for additional resources (locks that they wish to acqurie)

-No preemption: resources (eg. locks) cannot be forcibly removed from threads that are holding them

-Circular wait: there is a circular chain of threads such that each holds one or more resources that are being requested by the next thread in the chian

NEPALI: kei kura haru (2 bhanda badi) chahanu,

ti madhye euta chaheko kura payesi sabai napayesamma teslai naxodnu,

kasaile xudaunani nasaknu,

yesetai aru jhaatu haru bhayo ra tiniharu le chaheko kura overlap huna gayo bhane deadlock chance

Prevention: Play on the above points

Avoidance: Say there are two locks and four threads, their requirement of locks is as follow:

T1 T2 T3 T4

L1 yes yes no no

L2 yes yes yes no

Here T3 and T4 are not lovi (tini haru le kei kura chahannnan) so tiniharu lai aru sanga over lap garna painxa chill parama

Hence, if there is contentation for resources, if we want to avoid deadlock need to give up performance (rarely used)

Detect and recover: if os finds deadlock then recover by exiting them or something

NOTE: Threads are not the only way to write concurrent applications, there are some other styles so called event based not getting into it

PERSISTENCE: A canonical picture of an IO device: Registers -Status: can be read to see the current status of the device -Command: to tell the device to perform certain task -Data: to pass data to the device or get data from the device

Polling based:

while (status == busy)

; // wait until device is not busy

write data to the data register

write command to comman register

while (status == busy)

; // wait until device is done with your request

Interrupt based:

-OS issues a request, put the calling process to sleep

-and context switch to another task

-When the device is finally finished with operation, it will raise a hardware interrupt

-CPU jumps to ISR, which finishes the request

-Wake the process waiting for IO

NOTE: Polling good for fast devices, interrupt for slow, if speed not known then hybrid (poll for few cycles then wait for interrupt)

Another problem with canonical protocol: (to transfer from memory to disk)

-copy from memory to cpu then to disk's register

-initiate the i/o command

Solution: The copying from memory to disk is done by a special device called DMA

-OS tells dma to transfer from this memory location to disk

-DMA interrupts cpu to tell that its done

HOW ACTUALLY CPU COMMUNICATES WITH DEVICES REGISTERS? ANS: IO or memory mapped

OKAY TOO MANY DEVICES AND WEIRD INTERFACES: Ans: The OS would know how each interface works but provide an abstraction for the user so user has API’s like open, read, write etc. which are connected to generic block interface (block read/write) below which are the devices The abstraction happens as file system or raw interface

…

Two types of abstraction Files: as a linear array of bytes (specific details does not matter to OS whether a text of image), has an inode number associated with it Directory: also has an inode number, contains a list of user-readable&lowlevel name pairs, each entry is either a file or directory

API’s: -open(), read(), write() and close(); the c-functions printf() and all make use of these API’s

CONCURRENCY STUFFS NOW (in C++ specially)

Concurrency in C++: (read a decent OS book beforehand)

Programming languages offer concurrency but it could be actual hardware one or just task switching -Why would you write concurrent if not for parallelism, you ask? Ans: Because separating concerns is good and easy sometimes (illusion of responsiveness)

- Divide the program into multiple sepearate single threaded processes that are run at the same time -slow as involves lots of context switching -easy and safe to write than threads -can run processes on separate machines (with long communication cost)

- A single process with multiple threads -shared space and lack of protection makes threads overhead less -quite involved to write -NOTE that threads do have multiple stacks of their own

NOTE: Many programming languagues themselves offer some stuffs to do multithreaded programming (ofc they would use the OS API) while multi process program have to refer to OS API’s directly

WHEN NOT TO DO Concurrency? -Dont make it hard to understand and maintain buggy -If multiple threads then costs like memory and shit could overtake when adding the last thread

-

To initiate a thread: std::thread name(callable_object); the callable object could be a function or an object with () operator defined

Segway: std::thread my_thread(task()): task() is something temporary that is supposed to return a callable object but instead the statement declares ‘my_thread’function that takes in function (or rather pointer to it) that returns the same callable object (note i got confused once, that task() has no name of the function its like func_name(int) see the expected integer has no name)

To avoid such you one extra bracket or use curly brackets instead

OR lambda expressions (are such callable objects that avoid this) -defines a self-contained function that takes no paramters and relies only on global variables and funcitions (not even a return value) -[]{//body}(); calls immediately (but its not what it meant for) -usually passed as callable objects to other functions or whatever man -if need parameters and return value then: (such lambda expression are to be passed only not called as above) -[](int paramters){//body if you want to return then here only}

-something called lambda functions that reference local variables (LATER) -

Once you created a thread, you either join it i.e. wait for its execution or detach it (if you do nothing before the object is destroyed then its undefined ie if thread finishes early then cool but if not then calls terminate) -Detached threads run truly in the backgrounds so they are no longer joinable)

-

Pass paramters to function that starting point of threads: std::thread name(func-name, paramter, paramter …) -The arguments are copied into internal storage where they can be accessed by the newly created thread of execution and then passed to the callble object -Means that the parameters are copied to the stack of the newly created thread so if passed pointers could be the issue of dangling pointers if the main program already exited -If you want to pass by reference, then need to use std::ref or by good old pointer >Share memory using pointers: poor way of doing things >Share value: overhead >Instead use std::move that moves the object

Fact: Now if not joined then even if main exits then the thread could be running in some function somwether in lala land

-

Transferring ownership of a thread -A std::unique_ptr is a type of class that points to object (so has its own resource) then when it no longer points to the object, either assigned or pointer destroyed itslef then the object is also destroyed -So to transfer the ownership of such objects, special functions are provided -threads are also same in semantics, they own (are responsible for managing) a thread of execution -The ownership can be transferred between instances of std::thread so only one object is associated with a particular thread of execution

-Eg: std::thread t1(some_func1); t1 ← some_func1 thread std::thread t2 = std::move(t1); t1 ← none, t2 ← some func1 thread t1 = std::thread(some_func2) t1 ← some fun2 thread, t2 ← some func1 thread t1 = std::move(t2) tries to destroy the thread of execution associated with 1

Hence, could terminate why? cause before a thread of execution is destroyed it must be completed or detachedAppendinx: unique_ptr: (is a template) when goes out of scope, calls delete ; cannot copy unique pointer, because if one of them dies they take memory with them eg: std::unique_ptr<Class_name> name(new Class_name()); eg: std::unique_ptr<Class_name> name = std::make_unique<Class_name>(); [apparently safer] -cant copy it, cant share it shared_ptr: does somthing under the hood and allows sharing, only deletes the actual object when the reference count goes to zero

-

Real threading: std::hardware_concurrency() gives the actual hardware parallel threads that can be run. If oversubcriptoions then context switching could be takeover -so choose number of threads at runtime

SHARING THE DATA: Principle: -If all shared data is read-only, theres no problem, because the data read by one thread is unaffected by whether or not another thread is reading the same data -But if data shared between threads, and one or more threads start modifying the data, theres a lot of potential for trouble -A race condtion is where outcome depends on the relative ordering of execution of operations on two or more threads -A race is not necessarily a problem but only a problem when invariatns are broken

1. You see the possibility of race: (one thread changes the link of linked list while another does another stuff, changing the link is a multi step operation so one thread might come in between those operations)

2. You dont: two threads incrementing the counter data structure (seems like a single operation but is not)

[Fundamentally are the same stuffs: This piece is written by me only]

Solutions of such races: i. Make that operations be atomic ie they are either not started or completed, no in between. ii. Write operations of data structures such that each micro instruction preserve invariance (even if interrupted in between does not matter) [i is called locked, ii is called lock free] iii. Yet another new way is called software transactional memory where the operations are logged as transaction and conducted atomically

-

Mutex-based protection: -To lock the operations i.e. whenever one thread is on execution of that operation others cannot insert into it -std::mutex lock with member functions lock() and unlock() but pretty weird to call everytime -instead std::lock_guard template which locks the supplied mutex on construction and unlocks it on destruction, ensuring a locked mutex is always correctly unlocked -But still need to create the mutex variable -PROGRAM lock_using_mutex.cpp -can do template argument deduction by simply omitting the passed argument to the lock_guard template

Appendix: mutex variable lock_guard variable (template): locks when created, unlocks when destroyed (cannot unlock in between) unique_lock variable (template): can do inbetween unlocking but is safer or something; and flexible rey euta le bhaneko scoped_lock variable: superior of lock_guard which locks arbitrary number of mutexes at once (like std::lock upcoming)

Problem: If someone gets access to reference or pointer to the protected data strcuture, the locking mechanism is for nothing Solution: Dont pass pointers and references to protected data outside the scope of the lock, whether by returning them from a function

Problem: Granular locking schmemes (multiple locks in operations): interface problem; Solution: Careful programming Coarse types: decrease in advantages of concurrency

Problem: If you end up having to two or more mutexes for a given operation there’s another problem: deadlock -Quite opposite to race condition: rather than two threads racing to be first, each one is waiting for the other, so niether makes the progress

Solution; -Always lock the two mutexes in the same order (you have two member functions and two locks, you do different order: nobody would practically do it but okay) -Somehow use scoped_lock_guard function thats like lock_guard but takes in multiple mutexes and locks them together; Locks are not the only resources that cause deadlocks, there are others as well (LATER bebs) -

Conditional variables and synchronization: -We need to make thread wait until another thread meets a conditon (does not mean that the thread gets to do its stuff exactly when the condition is met, its after the conditin is met) -Put a protected flag; one thread polls it another updates it (wastage of time) so here is where CVs come, instead of polling we put it on a queue -instead of polling we wait using the conditional variable and signal afterwards, while the receiving thread is waiting -For some reason, the cond.wait(uniquelock mutex); the unique pointer is like guard lock template but some random reason google it up

-

One-off events, waiting for plane announcements (each time different plane)

-Common one-off event is the result of a calculation that has been run in the background. std::thread does not have easy means of getting the alue -Use std::sync to start an asynchronous task for which you dotn need the result right away. -Rather than giving a thread object to wait on, std::sync returns a std::future object which will eventually hold the return value of the function -When you need value you could call get() on the future, and the thread blocks until the future is ready and returns the value -Can pass parameter just like in std::thread

>Actually its upto implementation to whether to create a thread or do synchrnous execution when get() is called >To be explicit, there is first parameter to send, std::launch::deferred (to do synchronous) or std::lauch::async (to make a thread); or can do OR of them which is the deafult-Also can send paramters to the function -The function can expect a future object -then inside the body do future_object.get() -in main body, make a promise object do promise_obj.get_future() and now pass this future using std::ref(future_obj) -While sometime later can do, promise_obj.set_value(actual value) -note these are all templates

NOTE each future object can be done get()‘d only once

Additional stuffs like packaged tasks and shtits

-

C++ also supports atomic types like atomic int and like that for implementing lockfree data structures -For eg: make an atomic counter then we could do counter.fetch_add(value_to_add, )

FACTORS TO CONSIDER WHEN WRITING CONCURRENT ALGORS: >No of hardware concurrency available, subject to other appliction running at the same time >contentation: If threads are to modify the data, they do so on their individual caches, which if another core requires the same data, must be propagated to another cache, thats huge amount of time -atomic types possibility exists -using locks also has such possiblity >false sharing: if dadta owned by threads are closer then still possibility of ping poing -Every time the thread accesssing entry 0 needs to update the value, ownership of the cache line needs to be transferrred to processor running that thread -only to be transferred to the cache for the processor running the thread for entry 1 when that thread needs to update its data itme -std::hardware_destructive_interference_size tells you the size that aoids false sharing. if you have an array where each consecutive are shared by threads then need to align each of them by that value >proximity of data: you know you need to have your data in closely so can be brought together in cache. it causes problem in multithreading as well because of task switching, not going into detail. For this the data used by the thread better fit std::constructive

GUIDELINESS: -Try to adjust the data distribution between threads so that data that’s close together is worked on by the same thread -Try to minimize the data required by any given thread so it fits into one cache line using constructive_interference as guide -Try to ensure that data accessed by separate threads is sufficinety far apart to avoid false sharing using destructive_interference as guide

LINUX PROGRAMMING INTERFACE #####################################################################################################

-

Shell is a special purpose program designed to read commands and execute appropaite commands

- Bourne shell, C shell, Korn shell, Bourne again shell and so on

-

Single directory hierarcy to structure all files in the system.

File types:

-

Normal data files

-

Directories: special file whose contents take form of a table of filenames coupled with references to the corresponding files Links: filename-plus-reference

May contain link to files and directories Every contains at least two links . (self) and .. (parent)

-

Specially marked files: forms symbolic links

-

Others:

Pathname: location of file within the hierarchy (relative and absolute)

-

COMMAND: cd

-

Maintains users and their groups to access system resources,

- Users:

- Groups:

- Superuser:

Each file has an associated UID and GID that define the owner of the files.

-

Everything is a file. -I/O operations will work on any types that appropriates as a file -Files are referred by file descriptor (non-negative integer) -Three default open files, standard input, standard output, standard error

-

Processes: -Programs are basically series of statements written in a programming langauge. -When a machine program is launched: >Kernel loads the code of a program into virtual memory >Sets up kernel bookkeeping data structures to record various informations -Processes can request the kernel to perform various tasks using kernel entry points (kernel to user mode)

-

Filter programs: -Programs that reads its input from a stream (interface for IO), performs some transformation of that input, and generates another stream

COMMAND: echo

-Piping:

Filters may be strung together into a pipeline with the pipe operator ("|")

-Redirection:

Input (<), Output (>), Appends (>>)

6) Memory layout: -A process is logically divided into the following segments: > Text: > Data: > Heap: > Stack:

-

Process creation: -A parent process can create a new child process using fork() system call and inherits copies of parent’s data, stack and heap segments, which it may modify, while text segment marked read-only is shared by the two processes -Can use execve() system call to load and execute an entirely new program by destroying old segments, replacing them with new ones -Can terminate in one of two ways: >Request its own termination using exit() system call >Killed by the delivery of a signal >Returns a termination status, to the parent for inspection using the wait() system call.

-

Process credentials: 0. Unique identifiers PID and PPID

- Real UID and real GID:

- Effective UID and effective GID:

Priviliged process is one whose effective UID is 0. Becomes privilege: -Because it was created by another priviliged process -Via the set-user-ID mechanism, which allows a process to assume EID that is same as the UID of the program file that it is executing

- Environment variables:

Created with export MYVAR = “0” for shells

COMMAND: env

-

The init process: -Special process (parent of all) which is derived from the program file /sbin/init -PID = 1

A daemon is a special process that is long-lived and runs in the background and has no controlling terminal

-

Static and shared libraries: -Static: -Shared:

-

Interprocess communications: …

-

The /proc files: -Provides an interface to kernel data structures in a form that looks like files and directories on a file system

/proc/

~ /proc/self /proc/cpuinfo /proc/meminfo

FILES: /proc

- System calls: -Makes a system call by invoking a wrapper function in the C library (glibc for UNIX) -When a system call fails, it sets the global integer variable errno (initialize 0 first) to a positive value that defines the specific error -char* strerror(errno) returns a pointer to error string corresponding to errno

…

- File calls:

-fd = open(

, , <mode*>) -numread = read(fd, , ) -numwritten = write(fd, , ) -status = close(fd) -curr = lseek(fd, , )

SYSTEM: open() SYSTEM: read() SYSTEM: write() SYSTEM: close() SYSTEM: lseek()

>File holes:

-Space between previous end of file and the newly written bytes at an arbitrary point past the end of file

2) Buffer cache: -When working with disk files, read() and write() system calls dont directly initiate disk access, they simply copy data between a user-space buffer and a buffer in the kernel buffer cache >Reduces the number of disk transfers that the kernel must perform -Although much faster than disk operations, system calls take an appreciable amount of time which is minimized by buffering data in large blocks and thus performing fewer system calls (usually 4096 bytes)

-

stdio.h wrappers: -FILE *fopen(const char *

, const char * ) -int fclose(FILE *stream) -void setbuffer(FILE *stream, char *static_buf, size_t size) -int fflush(FILE *stream) -int fsync(int fd); fd = fileno(FILE *stream) - → stdio calls → → io syscalls → → disk write → -Not filling : fflush -Not filling : fsync -Not using at all: open flags -

Bypass the buffer cache: -Intended for specialized IO requirements, O_DIRECT open flags -Not necessarliy fast IO performance as misses various kernel optimizations

-

Device files: -Special file corresponds to a device on the system -Within the kernel, each device type has a corresponding device driver, which implements a set of operations that correspond to input and output actions on an associated piece of hardware -API provided by the device drivers is consistent, and includes operations corresponding to the system calls open(), close(), read(), write() and so on -Some devices are real, while others are virtual, meaning that there is no corresponding hardware; rather, kernel provides (via device driver) an abstract device with an API that is the same as a real device -Device files appear within the file system, just like other files, usually under the /dev directory -/sys directory allows the viewing of the device information and details

FILES: /dev FILES: /sys

-

Disk device files: -Each disk is divided into one or more nonoverlapping partitions, each treated by the kernel as a separate device residing under the /dev directory -A disk device may hold any type of information, usually contains: >a file system holding regular files and directories >a data area accessed as a raw-mode device >a swap area used by the kernel for memory management

-

Disk file systems: -Organized collection of regular files and directories -A disk partition := | boot-block | super-block | i-node table | data blocks | >Boot block is not used by the file syste, it contains information used to boot the operating system >Superblock contains parameter about the file system >Each file or directory in the file system has a unique entry in the i-node table >Majority of space in a file system is used for the blocks of data that from the files and directories residing in the file system

COMMAND: ls -li

-

I-nodes in ext2: -Each i-node contains 15 pointers, first 12 of these point to the locaiton in the file system of the first 12 blocks of the file -The next pointer is a pointer to a block of pointers that give the locations of the thirteenth and subsequent data blocks of the file -The number of pointers depends on the block size of the file system -Forteenth pointer is a double indirect pointer, which points to blocks of pointers that in turn point to blocks of pointers that in turn point to data blocks of the file -Further level of indirector: the last pointer in the i-node is a triple indirect pointer

-

Single directory hierarchy: -All files from all file systems reside under a single directory tree / -Other file systems are mounted under the root directory and appear as subtrees within the overall hierarchy

COMMAND: mount

COMMAND: mount

- Virtual file systems: -VFS defines a generic interface for file-system operations, each file system provides an implementation for the VFS interface -Linux supports notion of virtual file system that reside in memory and uses swap -One use is for the glibc implementation of POSIX shared memory and POSIX semaphores

…

- Process IDs: -Each program file includes meta describing the format of the executable file (COFF or ELF) -pid = getpid() -ppid = getppid() -If a child process becomes orphaned because its parent terminates, then its adopted by the init process

SYSTEM: getpid() SYSTEM: getppid()

-

Process memory: -Text segment: -Initialized data segment: -Uninitialized data segment: -Stack: -Heap: -The memory allocations depends on ABI (API for compiled codes) so once compiled for a particular ABI, an executable should be able to run on any system presenting the same ABI. -In contrast, standardized API guarantees portability only for applications compiled from source code. -

-

Virtual memory: -Splits memory used by each program into small, fixed-size units called pages -RAM is divided into a series of page frames of the same size, at any time, only some of the pages of a program need to be resident in physical memory page frames -Copies of the unused pages of a program are maintained in the swap area reserved to supplement computer’s RAM and loaded into memory only as required -When a process refernces a page that is not currently resident in physical memory, a page fault occurs, at which the kernel suspends execution of the process while the page is loaded from disk into memory -To support, the kernel maintains a page table for each process which describes the location of each page in the process’s virtual address space which indicates location of a virtual page in RAM or indicates that it currently resides on disk though, not all address ranges in the process’s virtual address space require page-table entries, -A process’s range of valid virtual addresses can change over its lifetime, as the kernel allocates and deallocates pages (and page-table entries) for the process in the following circumstances >As stack grows download beyond limits previous reached >When memory is allocated or deallocated on the heap, by raising the program break using brk() or sbrk() >When memory mappings are created using mmap() and unmapped using munmap()

-

Stack frames: -Kernel-stack is a per-process memory region maintained in kernel memory used as the stack for execution of the functions internally during the execution of a system call -User-stack frame contains: >Function arguments and local variables: >Call linkage information: Each time one function calls another, copy of callee-saved registers (ABI dependent) are saved on called function’s stack frame to restore when the function returns -Initializer startups () → main() → next() so on

-

Command line arguments: -int main(int argc, char *argv[], char *envp[]) -Non portable methods of accessing cl arguments: >/proc/PID/cmdline -argv and environ arrays, as well as the strings they initially point to, reside in a single contiguous area of memory just above the process stack

-

Memory allocation: -Program breaks: >&etext: end of text segment >&edata: end of initialized data segment >&end: end of uninitialilzed data segment -Allocate on heap: >success = int brk(void *end_data_segment); >previous_break = void *sbrk(intptr_t increment); -After the program break is increased, the program may access any address in the newly created area, physical memory pages are automatically allocated on the first attempt by the process to access addresses in those pages

SYSTEM: brk() SYSTEM: sbrk()

-Since virtual memory is allocated in units of pages, end_data_segment is effectively rounded upto the next page boundary

-Wrapup functions malloc() and free():

>Maintains a (doubly linked) list of free memory blocks with extra bytes to hold an integer containing the size of the block

>malloc() scans the free list in order to find one whose size is larger than or equal to its requirements

>If the block is exactly the right size, it is returned to the caller, if it is larger, then it is split so that a block of the correct size is returned to the caller and a smaller free block is left on the free list

>If no block on the free list is large enough, then calls sbrk() to allocate more memory (increases the program break in larger units) putting the excess memory onto the free list

>free() places a block of memory of size maintained on allocation onto the free list

WRAPPER: malloc() WRAPPER: free()

-

Process creation: -Dividing taks up makes application design simpler and permits greater concurrency -child_pid (parent) = fork(); 0 (child) = fork() -Two processes execute the same program text, but have separate copies of the stack, data and heap segments -Child can get its own PID using get_pid() and the ID of its parent using getppid() -After a fork, it is indeterminate which of the two processes is next scheduled to use the CPU

-

File sharing between parent and child: -When fork() is performed, the child receives duplicates of all of the parent’s file descriptors, consequently the file attributes of an open file are shared between the parent and child

-

Memory semantics of fork(): -To avoid wasteful copying when fork() is followed by an exec(), the kernel sets things up so that page-table entries for the data, heap and stack segments refer to the same physical memory pages as the corresponding page-table entries in the parent, and pages are marked read-only, after which kernel traps any attempts by either the parent or the child to modify one of these pages, and makes a duplicate copy of the about-to-be-modified page

-

Race conditions: -Cant assume a particular order of execution for the parent and child after a fork(), to guarantee a particular order, need some kind of synchronization techniques

-

Terminating a process: -For normal void exit(int status); -Falling off the end of main program is equivalent to exit(0)

-

Monitoring child process: -Waits for one of the children of the calling process to terminate and returns the termination status of that child: terminated_child = wait(int *status) >If no previously unwaited for child of the calling process has yet terminated, the call blocks until one of the children terminates >If a child has already terminated by the time of the call, wait() returns immediately >If a process has created multiple children, it is not possible to wait() for the completion of a specific child, we can only wait for the next child that terminates -pid_t waitpid(pid_t pid, int *status, int options); options := 0

-

Orphans and zombies: -Orphaned child is adopted by init, the ancestor of all processes, whose process ID is 1 -To the child that terminates before its parent has had a chance to perform a wait(), although the child has finished its work, the parent should still be permitted to perform a wait() at some later time to determine how the child terminated, resources held by the child are released back to the system to be reused by other processes, the only part that remains is an entry in the kernel’s process table recording usage statistics -When the parent does perform a wait(), the kernel removes the zombie, since the last remaining information about the child is no longer required -If the parent terminates without doing a wait(), the init process adopts the child and automatically performs a wait(), thus removing the zombie process from the system

-

Program exec: -When execve() system call loads a new program into process’ memory, after executing various C library run-time startup code and program intialization code (gcc constructor attritubes), the new program commences execution at its main() function -int execv (const char *pathname, char *const argv[]) -int execvp (const char *filename, char *const argv[])

Exams:

- OS and abstractions

-definition as a serive and as a layer

-two: abstract set of resources instead of messy hardware and managing the hardware resources

- why hardware hard? how OS solves it? example of SATA

- orderly and controlled allocation: process, memory and file management, security ##################################################################################################### TABENHAUM #####################################################################################################

Operating system: -OS is system software that manages computer hardware and software, and proides common services for computer programs -A modern computer consists of one or more processors, some main memory, disks, printers, a keyboard, a mouse, a display, network interfaces, and various other input/output devices, to manage and use them optimally, computers are equipped with a layer of software called the operating system -Two essentially unrelated functions: providing application programmers a clean abstract set of resources instead of the messy hardware ones and managing these hardware resources

As an Extended Machine:

-Real processors, memories, disks and other devices are very complicated and present difficult and inconsistent interfaces to the people who have to write software to use them, sometimes this is due to the need for backward compatibility with older hardware, other times it is a attempt to save money

-One of the major tasks of the operating system is to hide the hardware and present programmers with clean and elegant abstractions to with with instead

-Consider modern SATA (Serial ATA) hard disks used on most omputers, a book describing early version of the interface to the disk ran over 450 pages, no sane programer would want to deal with this disk at the hardware level, instead, a piece of software, called a disk driver, deals with the hardware and provides an interface to read and write disk blocks, without getting into the details, OS contain many drivers for controlling IO devices

As a Resource Manager:

-The job of the OS is to provide for an orderly and controlled allocation of the processors, memories and IO devices among the varous programs wanting them

1. Process Management:

-The OS decides the order in which processes have access to the processor, and how much processing time each process has in a multi-programming environment

-Includes multiplexing of CPUs in time, different programs or uses take turns using it, with only one CPU and multiple programs that want to run it, the OS first allocates the CPU to one program, then, after it has run long enough, another program gets to use the CPU and then other, and eventually the first one again

2. Memory Management:

-For memory management, the OS keeps track of which user program can use which bytes of memory, memory addresses that have already been assigned, as well as memory addresses yet to be used

-Other multiplexing is space multiplexing where main memory is normally divded up among several running programs, if there is enough memory to hold multple programs, it is more efficient to hold several programs at once rather than give one of them all of it

3. File Management:

-The file management tasks performed by an operating system are: it keeps track of where data is kept, user access settings, and the state of each file, among other things

-In many disk system a single disk can hold files from many users at the same time

4. Security:

-To safeguard user data, the operating system employs password protection and other related measures, also protects programs and user data from illegal access

-OS provides antivirus protection against malicious attacks across networks and has inbuilt firewall which acts as a filter to check the type of traffic entering into the system

Evolution:

-

Batch systems: -To run a job, a programmer would first write the program on paper (usually assembly), and punch it on cards, then bring the card deck down to the input room and hand it to one of the operators. Idea was to collect a tray full of jobs in the input room and then read them onto a magnetic tape using a realtively inexpensive computer, which was quite good at reading cards, copying tapes, and printing output, but not at all good at numerical calculations, after an hour of collection a batch of jobs, the tape was carried into the machine room, a special program (ancestor of todays’ OS), read the first job from tape and ran it, output was written on a second tape, instead of printed, after each job finished, OS automatically read the next job from the tape and began running it, when the whole batch was done, the operator removed the input and output tapes, replaced the input tape with the next batch, and brought the output tape for printing offline

-

Multiprogramming: -With advent of IC based computers, original intention was that all software, including the OS had to work on small systems with few peripherals as well as large systems for doing weather forecasting and heavy computing -Result was an enormous and extraordinarily complex OS, of which most important feature was multiprogramming -When the current job paused to wait for a tape or other IO operation to complete, the CPU simply sat idle until the IO finished, solution evolved was to partition memory into several pieces, with a different job in each partition, while one job was waiting for IO to complete, another job could be using the CPU -If enough jobs could be held in main memory at once, the CPU could be kept busy nearly 100% of the time -Having multiple jobs safely in memory at once requires special hardware to protect each job against snooping and mischief by the other ones, but the 360 and other third-generation systems were equipped with this hardware -Another major feature present in third-generation OS was ability to read jobs from cards onto the disk as soon as they were brought to the computer room, then, whenever a running job finished, the OS could load a new job from the disk into the now-empty partition and run it, the technique is called spooling

-

Time sharing: -With third-generation systems, the time between submitting a job and getting back the output was often several hours, so a single misplaced comma could cause a compilation to fail, and the programmer to waste half a day -Paved the way for timesharing, a variant of multiprogramming, in which each user has an online terminal -In a timesharing system, if 20 users are logged in and 17 of them are thinking or talking, the CPU can be allocated in turn to the three jobs that want service -Since people debuggin programs usually issue short commands, rather than long ones, the computer can provide fast, interactive service to a number of users and perhaps also work on big batch jobs in the background when the CPU is otherwise idle -CTSS (Compatible Time Sharing System), developed at MIT was the first general-purpose timesharing system, after whose success, Bell Labs and MIT envision a system providing computing power for everyone in the Boston area, known as MULTICS (Multiplexed Informatio and Computing Service) -Despite its lack of commerical success, MULTICS had a huge influence on subsequent operating systems especially UNIX and its derivatives -The concept of computer utility come back in the form of cloud computing, in which relatively small computers are connected to servers in vast and distant data centers

-

Interactive: (not) -Allows user to interact with the computer through a graphical user interface (GUI) or command line interface (CLI) -Multiple applications to run simultaneously, enabling users to switch between applications and perform multiple tasks at the same time, OS manages system resources to ensure that each application gets the resources it needs to function properly. -Apple Macintosh was a huge success as it as user friendly, meaning that it was intended for users who not only knew nothing about computers but furthermreo had absolutely no intention whatsoever of learning

-

Real time: (not) -With the advent of IC’s in the form of embedded sstems, real time processing arose across various industries, including aerospace, defense, and industrial automation -Real time processing is a computer system’s ability to process data and respond to events in real-time, or in other words, within a guaranteed time frame, typically measured in milliseconds or microseconds, to ensure that the system meets its timing rqeuirements -One of the eariest RTOS was Executive for Microprogramming Systems (RTOS/MP_ by Digital Equipment Corporation (DEC), which was used in various real-time systems, including the Apollo Guidance computer in the Apollo missions to the moon -Applications include automotive systems, medical devices, and consumer electroincs

OS Structure: -Since the OS is such a complex system, it is created with utmost care to preserve maintainability

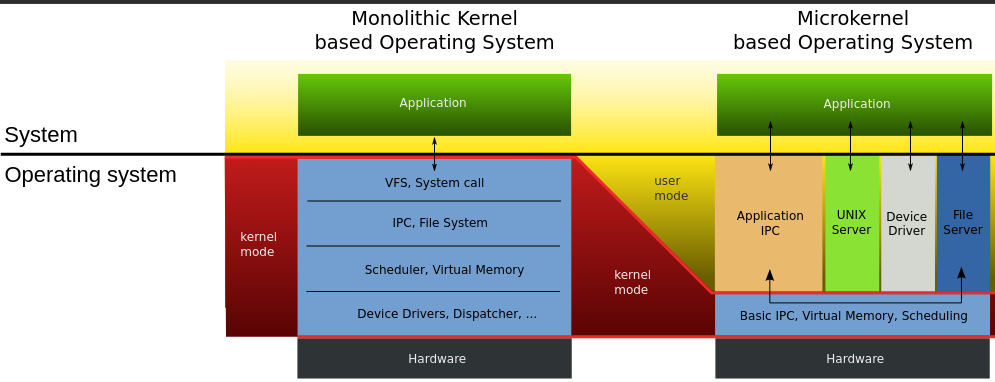

1) Monolithic structure: (figure)

-In the monolithic approach, the entire OS runs as a single program in the kernel mode, written as a collectio of procedures, linked together into a single large executable binary program, each procedure in the system is free to call any other one, if the latter provides some useufl computation that the former needs

-Being able to call any procedure you want is very efficient, but having thousands of procedures that can call each other without restriction may also lead to a system that is unwieldy and difficult to understand, also crash in any of these procedures will take down the entire OS

-There is essentially no information hiding, every procedure is visible to every other procedure

-Even in monolithic systems, it is possible to have some structure:

1. A main program that invokes the requested system procedure

2. A set of service procedures that carry out the system calls

3. A set of utility procedures that help the service procedures

-For each system call, there is one service procedure that takes care of it and executes it, utility procedures do things that are needed by several service procedures, such as fetching data from user programs

-Many OS support loadable extensions, such IO drivers and file systems, which are loaded in demand, in UNIX called shared libraries, in Windows, they are called DLLs

-Examples: CP/M, DOS

2) Layered systems: (figures)

-A generalization to structure of monoliths is to organize the OS as a hierachy of layer, each one constructed upon the one below it

-First system constructed in this was the THE system built by EW Dijkstra and his students, was a simple batch system for a Dutch computer

-The sytem had six layers:

5 : The operator

4 : User programs

3 : Input/output management

2 : Operator-process communicatino

1 : Memory and drum management

0 : Process allocation and multiprogramming

0: Processor allocation and multiprogramming:

-Dealth with allocation of the processor, switching between processes when interrupts occured or timers expired, above 0, the system consisted of sequential processes, each of which could be programmed without having to worry about the fact that multiple processes were running on a single processor

1: Allocated space for processes in main memory and on a 512K word drum used for holding parts of processes (pages) for which there was no room in main memory, above 1 processes did not have to worry about whether they were in memory or on the drump

2: Handled communication between each process and the operator console, on top of which each process effectively had its own operator console

3: Took care of managing the IO devices and buffering the information streams to and from them, above 3 each process could deal with abstract IO devices with nice properties

4: Where the user programs were found, did not have to worry about process, memory, console or IO management

5: Sytem operator was placed at layer 5

-A further generalization of the layering concept was present in the MULTICS system, instead of layers, MULTICS was described as having a series of concentric rings, with the inner ones being more privilged than the outer ones

3) Microkernels: (figure)

-Basic idea behind microkernel design is to achieve high reliability by splitting the OS up into small, well-defined modules, only one of which - the microkernel - runs in kernel mode and the rest run as relatively powerless ordinary user processes

-For putting as little as possible in kernel mode because bugs in the kernel can bring down the system instantly

-In particular, by running each device driver and file system as a separate user process, a bug in one of these can crash that component, but cannot crash the entire system, thus, a bg in the audio driver will cause the sound to be garbled or stop, but will not crash the computer

-They are dominant in real-time, industrial, avionices, and military applications that are mission critical and have very high reliability requirements, Integrity, K42, L4, PikeOS, Symbian, MINIX 3

-Outside the kernel, MINIX 3 is structured as three layers of processes all running in user mode,

-Lowest layer contains the device drivers, to program an IO device, the driver builds a structure telling which values to write to which IO ports and makes a kernel call telling the kernel to do the write, means that kernel can check to see that the driver is writing (or reading) from IO it is authorized to use, consequently a buggy audio driver cannot accidentally write on the disk

-Above the drivers is another user-mode layer containing the servers, which do most of the work of the operating system, one or more file servers manage the file system(s), the process manager creates, destroys, and manages processes and so on

1) Client-server model: (figure)

-A slight variation of the microkernel idea is to distinguish two classes of processes, the servers, each of which provides some service, and the clients, which use these services

-Communication between clients and servers is often by message passing, to obtain a service, a client process constructs a message saying what it wants and sends it to the appropriate service, the service then does the work and sends back the answer, if the client and server happen to run on the same machine, certain optimizations are possible

-An obvious generalization of this idea is to have the clients and servers run on different computers, connected by a local or wide-area network

-Increasingly many systems involve users at their home PCs as clients and large machines elsewhere running as servers, in fact, much of the Web operates this way, a PC sends a request for a webpage to the server and the webpage comes back

5) Virtual machines: (figure)

-The principle of virtualization is based on an astute observation that a timesharing system provides 1) multiprogramming and 2) an extended machine with a more convenient interface than the bare hardware, essence is to completely separte these two functions

-Heart of the system runs on the bare hardware and does the multiprogramming, providing not one, but several virtual machines to the next layer up, however, unlike all other oS, these virtual machines are not exteded machines, with files and other nice features, instead they are exact copies of the bare hardware including kernel/user mode, IO, interrupts and everything else the real machine has.

-Because each virtual machine is identical to the true hardware, each one can run any operating system that will run directly on the bare hardware, different virtual machines can, and frequently do, run different operating systems

-The special virtual machine monitor program is also known as type 1 hypervisor, next step in improving was to add a kernel module to do some of the heavy lifting, known as type 2 hypervisors

-In practice, the real distinction between a type 1 hypervisor and a type 2 hypervisor is that a type 2 makes uses of a host OS and its file system to create proceses, store files and so on, a type 1 has no underlying support and must perform all these functions itself

-Uses:

>Many companies see virtualization as a way to run their mail severs, FTP servers and other servers on the same machine without having a crash of one server bring down the rest

-Popular in web hosting world, a customer can rent virtual machine and rent whatever OS and software they want to, but at a fraction of the cost of a dedicated server

-For end users who want to be able to run two or more OS at the same time, say Windows and Linux

-Another area is somewhat different way, is running Java programs and equivalents

(okay all the talks about abstraction but how does it achieve it?) System calls: -System calls are the mechanism of interaction between user programs and the operating system whereby the user programs request a service from the OS, for example, creating, writing, reading, and deleting files -In a sense, making a system call is like making a special kind of procedure call, only system calls enter the kernel and procedure calls do not -Consdier the read system call, it has three parameters: the first one specifying the file, the second one pointing to the buffer, and the third one giving the number of bytes to read -Like nearly all system calls, it is invoked from C programs by calling a library procedure with the same name as the system call: read - count = read(fd, buffer, nbytes); -Returns the number of bytes actually read in count, is normally same as nbytes, but may be smaller, if, end-of-file is encountered while reading 1. C compilers push paramters onto the stack in reverse order then call the library procedure associated with read 2. The library procedure, possibly written in assembly, typically puts the sytem-call number in a place where the OS expects it, usch as a register 3. Then it executes a TRAP instruction to switch from user mode to kernel mode and start execution at fixed address within the kernel 4. Once it has completed its work, control may be returned to the user-space library procedure at the instruction following the TRAP instrucion 5. This procedure then returns to the user program in the usual way procedure calls return -POSIX is a family of standards specieid by the IEEE for maintaining compatiblity between operating systems specifies a family of precedural calls:

Shell Programming: -

Process: -A process is just an instane of an executing program, including the current values of the program counter, register, and variables -A program is something that may be stored on disk, not doing anything; while a process is an activity of some kind, that has a program, input, output and a state -Conceptually, each process has its own virtual CPU, in reality, the real CPU switches back and forth from process to process, such rapid switching of back and forth is called multiprogramming

>Creation:

-When an OS is booted, typically numerous processes are created, some of these are foreground processes that interact with users and perform work for them, others run in the background and are not associated with particular users, but instead have some specific function

-In addition, new processes can be created afterwards, often a running process will issue system calls -fork() in UNIX, to create one or more new processes to help it do its job

-When a new process is created, is assigned a unique process ID and inherits the memory image and resources of its parent process, but executes in a separate address space

-Usually, the child process then executes execve() to change its memory image and run a new program

>Termination:

-Most processes terminate because they have done their work, second reason for termination is that process discovers a fatal error, third is an error caused by the process, often due to program bug, or the fourth reason is that a process executes a system call telling OS to kill some process

-When a process terminates, it releases all the resources it was using, including memory, open files, and any other system resources. The operating system also releases the process's PID, making it available for use by other processes.

>Hierarchies:

-In some systems, when a process creates another process, the parent process and child process continue to be associated in certain ways

-In UNIX, just after the computer is booted, a special process, called init, is present in the boot image

-When it starts running, it reads a file telling how many terminals there are, then it forks off a new process per terminal

-These processes wait for someone to log in, if a login is successful, the login process executes a shell to accept commands

-These commands may start up more processes, and so forth, thus, all the processes in the whole system belong to a single tree, with init at the root.

-In contrast, Windows has no concept of a process hierarchy. All processes are equal, the only hint of a process hierarchy is that when a process is created, the parent is given a special token (called a handle) that it can use to control the child, however, it is free to pass this token to some other process, thus invalidating the hierarchy.

>States: (figure)

-In a multitasking computer system, processes may occupy a variety of states

1. Running: actually using the CPU at that instant

2. Ready: temporarily stopped to let another process run

3. Blocked: unable to run until some external event happens

-Four transitions are possible among these three states

1. Process blocks for input: occurs when OS discovers that a process cannot continue right now, in UNIX, when a process reads from a pipe or special file and there is not input available

2. Scheduler picks it: when all other processes have had their fair share and it is time for the first process to get the CPU to run again

3. Scheduler picks another process: when the scheduler decides that the running process has run long enough, and it is time to let another process have some CPU time

4. Input becomes available: when the external event for which a process was waiting happens

>Implementation of process switching: (ekdum hardware level ma najaa C programming ko level ma jaa)

-To implement the process model, the OS maintains a table, called the process table, with one entry per process, which contains important information about the process's state, including its program counter, stack pointer, memory allocation, status of its open files, its accounting and scheduling informatin, and other bookkeeping data structures

-Associated with IO class is a location called the interrupt vector that contains the address of the interrupt service

1. Suppose process 3 is running when a disk interrupt happens, user process 3's program counter, status word, and sometimes one or more registers are pushed onto the (current) stack by the interrupt hardware

2. Computer then jumps to the address specified in the interrupt vector, that is all hardware does

3. All interrupts start by saving the registers, often in the process table entry (PTE) for the current process, then the information pushed onto the stack by the interrupt is removed and the stack pointer is set to point to a temporary stack used by the process handler (Actions such as saving the registers and setting the stack pointer can not even be expressed in high-level languages as C, so they are performed by a small assembly-language routine)

4. When this routine is finished, it calls a C procedure to do the rest of the work for this specific interrupt type

5. When it has done its job, possibly making some process now ready, the scheduler is called to see who to run next

6. After that, control is passed back to the assembly-language code to load up the registers and memory map for the now-current process and start it running.