Philosophy of mathematics

The geometry you did in high school is the virtue of one man- Euclid of Alexandria. The man, in 300 BC, introduced five postulates and single handedly built the field of geometry on them. His work was so elegant that it took us 20 centuries to realize that tweaking of one of his postulate can lead to new ideas, what we now call the ‘non-Euclidean’ geometries. Following the philosophical footsteps of Euclid, David Hilbert introduced a program which was intended to be axiomatization of, not just of the new geometries, but of all of mathematics. It was certainly the hardest task a man had ever taken.

What real maths look like

The mathematicians working on the program needed to formalize the notion of ‘effectively calculable functions’ to describe what a ‘mathematical proof’ means. They had intuitive sense that the method or procedure to calculate such functions had to be mechanically feasible. All the naturally occurring functions: polynomials, rationals, trigonometrics, exponentials would fall under this class because if there is a natural phenomena related to a function, one could devise a calculable machine based on the physics of it. Beside these, there could be numerous other functions, some even unknown to mankind. To encapsulate all of them is a daunting task. The problem is essentially this:

If you give me a function f, I will, probably, tell you whether f should lie in the class S or not.

Give me a concise definition of the class S.

Such problems are what they encounter in ‘real life’ mathematics which gives you a lot of freedom to exercise your creativity. It is, in fact, exactly opposite to the ‘university’ way of doing mathematics:

Here is the definition of class S.

Will you tell me whether the function f falls on the class S?

I felt proud when I proved sin(x) to be continuous at x = 0 using ϵ-δ definition of continuity. But real genius was the guy who came up with it.

Now there are probably two ways you could approach this problem:

Define a class S and claim the procedures that calculate functions in S to be ‘effective methods’

Construct an ultimate mechanical machine ‘M’ and claim functions calculable by M to be ‘effectively calculable’

Rise of two young men A young Kurt Godel (o with double dot) preferred the first one. He formalized the definition of the class of general recursive functions: the smallest class of functions that would capture the intuitive notion of effectively calculable functions.

Another young man named Alan Turing used the second approach to come up with a mathematical (but mechanically possible) model of an ultimate machine that does nothing but manipulates symbols on a strip of tape according to a table of rules. The set of functions calculable by this machine, now called Turing machine, is precisely the class of general recursive function defined by Godel.

The equivalency of these definitions suggest a mechanical upper limit to the notion of ‘computability’. Note I am emphasizing on the ‘mechanicality’, for some words are in order when Turing machine gets quantum (that’s the story for another letter). Let’s get bold and define computability.

effectively calculable functions (informal notion) = general recursive functions (Godel’s definition) = ‘computable functions’ (formal notion)

effective methods or procedures (informal notion) = turing machine (Turing’s definition) = ‘algorithms’ (formal notion)

The Godel’s and Turing’s ideas act as the bridge between the informal and formal notion of effective calculability. Although this claim of computability has near-universal acceptance, it cannot be formally proven as the concept of effective calculability is only informally defined.

People have experimented with Turing machines, equipping it with multiple tapes, nondeterminism and so on. They could only come up with machines that compute faster or use less memory but they cannot compute more powerfully (i.e. more mathematical functions). Thus, the claim still stands, and will very likely keep standing.

Modern computers The ‘Universal Turing machine’ is a variant of the original Turing machine that simulates an arbitrary Turing machine on arbitrary input. The universal machine essentially achieves this by reading both the description of the machine to be simulated as well as the input to that machine from its own tape.

The Universal Turing machine seems to compute all that is possible. So, solving problems would be easier if people had such machines in their offices. Unfortunately such machines are hardly feasible, even if you could build them, programming such machines would be no easy task. In this context, programming would be to encode a Turing machine into a tape and feed it to the Universal Turing machine.

Turing himself and many authors investigated equivalent machines that could be brought into practice. Building upon the work on Turing, John von Neumann devised the concept of a stored program machine. As far as computational capability, Turing machines and von Neumann machines are equivalent. Either one can emulate the other. The modern digital computers are just sophisticated von Neumann machines. Charles Babbage is said to have anticipated the idea a century earlier.

So, if any, modern computer science has three full fathers.

Non-computability The natural question to ask is ‘what are those functions that are out of reach of mechanical computation?’. The answer is the following decision problem:

f(M, w) = Given a Turing machine M, will it halt on the input w?

This function and other functions reducible to it are uncomputable. That is to say, there is no algorithm which will tell you whether the given Turing machine will halt on an arbitrary input. You could devise a hypothetical oracle that would possibly answer that question, but again there would be no recipe to construct it mechanically.

Are you Turing complete? In general when people talk about models that are near the Turing machines, they use following terminologies:

A model is Turing-complete if it is at least as powerful as Turing machines.

A model is Turing-equivalent if it is exactly as powerful as Turing machines.

An example of a Turing-complete system which is not Turing equivalent is Turing machines with oracle access to an oracle for the halting problem. The Turing equivalency or completeness does not necessarily mean that you can build mechanical version of such models. The physically implementable Turing-complete systems are Turing-equivalent, which aids our claim of computability.

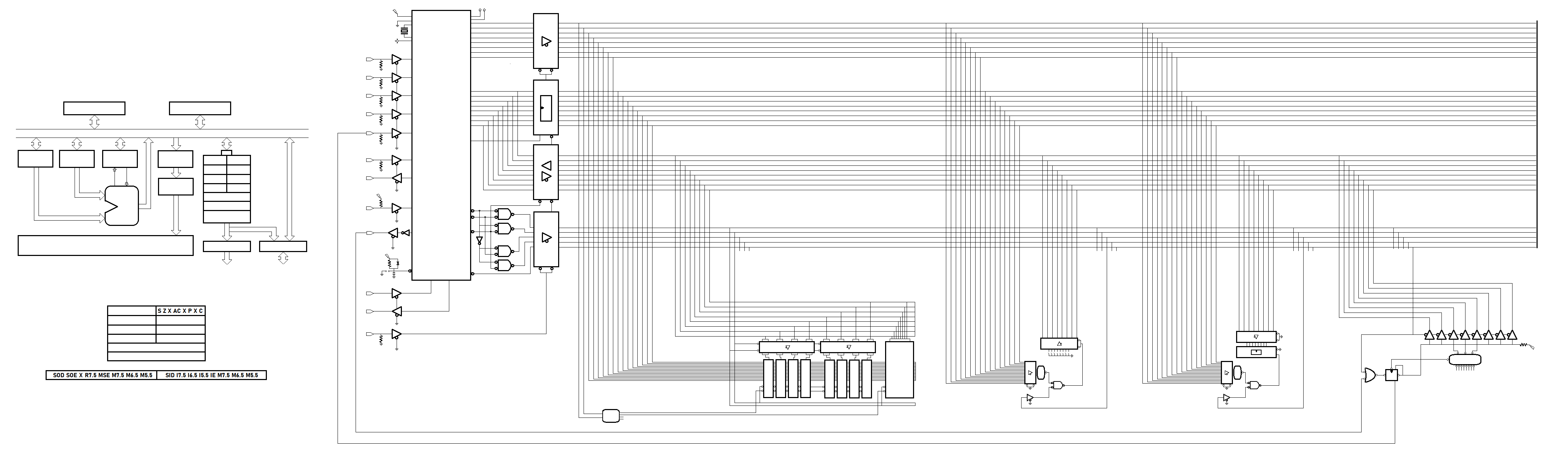

Powerful than a Turing machine The modern digital computers are, in a form of abstraction, von Neumann machines. However, the actual physical devices are built from electronics circuits. The circuit model which consists the multiset of universal gates such as {AND, NOR, NOT} are Turing equivalent and nearer to real computers.

Furthermore, if you allow the circuits to have to be ‘infinite’ they can solve the halting problem. So, infinite circuits are in some sense ‘too powerful’ but they’re not realistic.

What else are Turing complete? To show that something is Turing-complete, it is enough to show that it can be used to simulate some Turing-complete system. For instance, an imperative language like C is Turing-complete if it has conditional branching (e.g., ‘if’ and ‘goto’ statements) and the ability to change an arbitrary amount of memory. If the limitation of finite memory is ignored, most modern programming languages are otherwise Turing-complete. Some softwares are Turing-complete by accident, i.e. not by design include Microsoft Powerpoint, Microsoft Excel, x86 MOV instruction.

Practical computability Turing machines are also helpful in establishing the notion of effective computability i.e. the set of problems that are solvable practically with limited resources. The resource DTIME, which is the computation time for a deterministic Turing machine, is used to define complexity classes, sets of all of the decision problems which can be solved using a certain amount of computation time. If a problem of input size n can be solved in O(f(n)), we have the complexity class DTIME(f(n)).

The well-known complexity class P comprises all of the problems which can be solved in a polynomial amount of DTIME.

A much larger class using deterministic time is EXPTIME, which contains all of the problems solvable using a deterministic machine in exponential time.

The complexity class NTIME(f(n)) is the set of decision problems that can be solved by a non-deterministic Turing machine which runs in time O(f(n)).

The NP is the set of decision problems can be solved in polynomial time by a nondeterministic Turing machine in polynomial time or alternatively have proofs verifiable in polynomial time by a deterministic Turing machine.

Million dollar question Since we have already established that a simple Turing machine can simulate the nondeterministic Turing machine in totality (regardless of the resources), isn’t it obvious that P = NP? I mean you just add the resource constraint into the equation. Rephrasing the question:

Can a polynomially bounded Turing machine simulate the steps of polynomially bounded non-deterministic Turing machine in polynomial time? Or,

Can a problem verifiable in polynomial time is also solvable in poynomial time?

It turns out that our civilization is not yet capable of answering such questions. Nevertheless we have managed to establish a set of specific problems called NP-hard such that, finding a polynomial time algorithm to solve any NP-hard problem would give polynomial time algorithms for all the problems in NP. Hence prove P=NP. An equivalent definition is to require that every problem in NP can be solved in polynomial time by an oracle machine capable of solving a NP-hard problem.

Some NP-hard problems are themselves in NP, such are called NP-complete, like the infamous 3SAT while there are decision problems that are NP-hard but not NP-complete such as the halting problem.

Two kinds of paralleism in applications:

-Data level parallelism (DLP) arises because many items can be operated on at same time

-Task level (TLP) tasks of work are created that can operated independely and in parallel

Two kinds of paralleism in applications:

-Data level parallelism (DLP) arises because many items can be operated on at same time

-Task level (TLP) tasks of work are created that can operated independely and in parallel

Computer hardware in turn exploit these two kinds of applciation in four major ways;

-Instruction level: pipelining using compiler help, speculative execution

-Thread level: interaction between parallel threads

-Multimedias, vectors and GPUs: apply single instruction to collection of data

-Request level: explits parallelism among largely decoupled tasks specified by OS or programmer???

Trends in technology: Five technology crucial to architecture -IC, semiconductor DRAM, semiconductor Flash, magnetic, network

Trends in power and energy of IC: -For CMOS chip, the power of logic transisiion is: Pdynamic propto= Capacitive * Voltage ^2 * Frequency (for energy remove frequency term) -Capactiive load is a function of the number of transistors connected to an output -A 4GHZ intel core i7-6700K consumes 95W (some mups do overclocking) -For static power, is propto device connected: Pstatic propto= Current * Voltage

Trends in cost: -Cost of IC = yield * (cost of die + cost of testing die + cost of packaging and final test) -Cost of die = yield * (cost of wafer / dies per wafer) which is highly sensitive to die area

Principles: -Take advantage of property of programs: locality and parallelism

CPU time = * instructions per program (isa and compiler) | quite out of hand for now

* clock cycles per instructions (organiz nd isa)

* inverse of frequency essentially (hardware and isa)

>Goal to reduce CPI as much as possible of which frequency is by product decision

TRANSISTOR TECHNOLOGIES: -All the fukcing way of making shits

MEMORY HIERARCHY AND TECHNOLOGY: -Principle of locality, space and time + economics + physics = memory hierarchy -A modern core i7 6700 generates two data memory refererences per core each clock cycle, with four core and a 4.2GHz the i7 can generate a peak of 32.8 billion data memory references per second, in addition to a peak instruction demand of about 12.8 billion 128 bit instruction refernces; total bandwidth of 409.6GiB/s

1. Registers:

-Multiple ports CMOS

-typical sizes of 4KiBs managed by compiler itself

-Access tim eof 0.1 to 0.2 ns

-Bandwidth of 1,000 GB/s to 10,000 GB/s

2. Caches:

-Onchip CMOS SRAM

-typical sizes of 32KiBs to 8MiBs

-Access time of 0.5 to 10 ns

-20GB/s to 50GB/s

3. Memory:

-CMOS DRAM

-10 to 30GB/s of bandwidth

-Access time for 30-150 ns

-Typical size of < 1TB

4. Disks:

-Magnetic disks

-Access time of 5,000,000 ns

-Bandwidth of 0.1GB/s to 1GB/s

CACHES:

-Twakka surumai rakhinxa ani block ma transfer garinxa cache from/to memory bhanni kura ta thaha bhaihalyo, suruma cache ma hernu paryo ani bhetiyo bhane hit natra miss bhayera memory bata leuna paryo

-The CPI can be written as:

CPI = (CPI for cpu clock cyles + CPI for memory stall cycles)

where,

CPI memory stalls = misses per instructions * penalty

= memory accesses per inst * miss rate * penalty

1. Block placement: How the block be placed in the upper level?

>Direct: (Block address) MOD (No. of blocks in cache)

>Fully assoic: Anywhere in the cache

>Set associative: Cache divided into sets each containing blocks

2. Block identififi: How is a block found if it is in the upper level?

>| Block address | Block offset |

>| Tag | Index | Block offset |

-Go to the set told by the index and do the associative search there, if direct mapped one block per set, if fully then all blocks in a set, index = log2(no of sets in the cache)

-Increasing associativity increases the number of blocks per set thereby decreasing the size of index and increasing the size of the tag, ta-index boundary moves to the right with increasing associativity

3. Block replacement: Which block on upper lvl should be replaced on a miss?

-Which of the blocks from the set need be replaced when another block from memory needs its place? since only one block in direct mapped no decision making needed

>Random: not so bad as you think

>Least recently: quite complicated ngl

>First in, first out: approximation as assumes first in = LRU

4. Block write strategy: What happens on a write hit or miss both considerations?

>Write through: data coherency; act like read misses

>Write back: uses less memory bandwidth; modified only in lower memory

Cache enry:

>| Valid | Tag | Reference | Dirty | ... | Data block |

[Dont live as a entry entry but point to]

OPTIMIZATIONS:

-Reducing miss rate

-Reducing time to hit the cache

-Reducing miss penalty

-Consider complexity

-Take power as a factor

-Bandwidth majorly depends on technology

Improve rate:

>Larger cache size: time, power

>Larger block size: penalty

>Higher associativity: complexity

Usual cache size = 8KB, block size = 64 bytes, associativity = 2 or 4s

Access time:

>Avoid address translation

-To use virtual or physical address to index the cache and whether a virtual or physical address is used in the tag comparison

-Use full virtual but that means

-no protection information check

-flush cache on process switching

-multiple instances of same memory block

-An alternative is use the part that is identical in both virtual and physical addresses to index the cache which allows the cache read to begin immediately and yet the tag comparison is still with physical addresses

-Limitations is that a direct-mapped cache can be no bigger than the page size

Reduce penalty by:

>Multilevel caches at the cost of complexity

-The first level cache small to match the clock cycle of fast processor, yet second large enough to capture accesses

-Penalty L1 = Hit time L2 + Miss rate L2 * Miss penalty L2

-All the memory considerations of memory apply here

-Another consideration concerns whether data in first level are in inclusion to lower levels

-Multilevel inclusion means L1 data are always present in L2 is desirable for consistency

-Multilevel exclusion allows varying block sizes miss in L1 results in a swap of blocks between L1 and L2 instead of a replacement

Others:

-Pipelined access and multibanked caches to increase bandwidth

-Nonblocking caches for out of order execution to increase bandwidth

-ETC.

Compiler technologies:

Algorithms: analysed in terms of of the number of block fetches

-Need algorithms to use a block to its fullest when it is brought from lower to higher memory

-Say two levels only: L1 cache and others or equivalents then goal is to minimize the no. of memory transfers to the lower memory

-Need to explicitly manage the cache size M and block size B

-A cleaner model is called oblivious which does not know B or M

VIRTUAL MEMORY:

-Relieves programmers to manage the two levels of memory hierarchy presented by main memory and secondary storage

-OS stuffs needs here this only for caches

For memory-disk movement done by the OS with support form hardware:

1. Block placement: Anywhere OS wants

2. Block identififi: Through TLB

3. Block replacement: LRUs

4. Block write strategy: Write back

Usual memory sizes = 8GBs, page size = 4KBs

>TLB is a cache so everything applies

SRAM technology:

-SRAMs dont need to refresh so the access time is ver close to the cycle time, you know the circuit

-On chip cache SRAMs (state of art) are normally organized with a width that matches the block size of teh cache with the tags stored in parallel to each block

-Allows an entire block to be read out or written into a single cycle

DRAM technology:

-You know the circuit for an element of DRAM, right?

-One bit is one transistor with a trench capacitor

-A DRAM cell array consits of a row and column decoder to minimize the circuitry

-A matrix of DRAM cell provide N*1 bit storage per cycle fetched in row buffer

-Banking enables parallel matrices each having their own buffer

-Multiple banks go into a chip to get say 8-bit at once

-Now 16 of those chips form a rank of which DIMM consists of two

-A 64-bit data gets divided into 8 16-bits chunk each of which go to individual chips on rank after which to individual banks

REAL STUFFS:

Intel i7 (some real info)

Processor:

-x86-64 ISA can execute upto four instructions per cycle using a multiple issue, dynamically scheduled, 16 stage pipeline

Main memory:

-Supports upto three memory channels 3*64 bits each consisting of separate DIMMs and each of which can transfer in paralle

-With DDR4 memory can provide a peak memory bandwidth of upto 45 GB/s (while peak CPU requirement is 400+GB/s)

Addresses:

-48-bit virtual addresses and 36-bit physical addresses

Virtual address:

|...|Global-dir(9)|Upper-dir(9)|Middle-dir(9)|Page-table(9)|Offset(12)|

TLB Entry:

|Caching disabled?|Refernced?|Modified?|Protection?|Present/absent?|Tag(32)|Physical address(24)|

TLB sizes:

>ITLB: 8-way 128 PTEs in 8 banks

>DaTLB: 4-way 64 PTEs in 4 banks

>L2 unified: 1536 PTEs in 12 banks

Cache sizes:

>Block size = 64 bytes

L1 cache: 8-way 32KiB in 8 banks

L2 unified cache: 4-way 512 blocks in 8 banks

L3 shared caches: 16-way 4K blocks in 4 banks

>Pseudo LRU, Write-back

>Penalises 4, 12 and 44 clock cycles respectively

>L1 multibanked and pipelined, each nonblocking etc.

[Why they have separate data and instruction caches? pipeline issue]

PIPELINING: -Better done in overleaf probably

BACK TO PROGRAMMING: -We know pipelining, scheduling and superscalarity are not ours to handle so we will do caches, multithreading, multicore, vectors

CACHE: >We analysze in terms of number of blocks that are brough from memory to cache

# Cache memory mode:

-Following paramters:

N = number of data items in the problems

B = number of data items in a cache block

M = number of data items in the cache

a = associativity of the cache

and the performance measures:

- the number of cache misses

- the number of instructions

>Assume LRU eviction mechanism

[yesma chai dui level assume garne tara cache memory ho ki memory disk ho nabhanni rey, ani testo garda whole memor hierarchy ko lagi optimize hunxa]

Following assumptions:

-Optical replacement, furthest in future wala

-Inclusion property like cache and memory

-Full associaitbiy

-Automatic replacmeent, done by harware in LRU fasihon

Design tools:

1. Analysize scanning:

-A simple algorithm for finding the sum of elements in an array:

for i in range(N):

r += A[i]

-A scan of an array will at most load O(N/B) buffer loads

-In exact its ceil(N/B)+1 misses

2. Transposition:

for (i = 0; i < N; ++i)

for (j = i+1; j < N; ++j)

swap(A[i][j], A[j][i])

which is O(N^2)

an improvement is:

2. Matrices multiplication:

-For the sake of easiness lets assume that they are cache aligned and that each row fits in a column even if we are wrong we will be off by 1

-Let Z = X*Y where X is row major and Y is column major so finding an element in Z is a two scans across the X and Ys gives the cost of O(N^2/B)

for i in range(N):

for j in range(N):

r = 0

for k in range(N):

r += A[i][k]*A[k][j]

A[i][j] = r

-With the following assumption in mind, unless the Y blocks fits in the cache totally then we are good cool it takes total in how many blocks are there in the all of three matrices as they all can stay in the cache at one time which is usually not a good assumption

-Instead of operating on entire rows or columns of an array blocked algorithms operate on submatrices or blocks with goal to maximize the access to the data loaded into the caceh before the data are replace

for jj in range(0,N,B):

for kk in range(0,N,B):

for i in range(N):

for j in range(jj+B):

r = 0

for k in range(kk+B):

r = r + x[i][k]*y[k][j]

x[i][j] = x[i][j] + r

[Later with GPU though]

Memory

Physics ra economics le garda dherai types ko memory hunxan (ekchoti BJT, JFET, MOSFET everything revise garnu xa sararararara)

NONVOLATILES:

ROM: -Sab rom ma decoder hunxa jasbata word line decode bhayera niskinxa (so input to decoder is address and output is word line), each word line ko through memory elements hunxa jun chai tala bits line ma convert hunxa so address goes into decoder to become multiple words and each word line generate multiple bit lines which could be on or off -Initially sab 1’s hunxan, program garnu bhaneko teslai 0 banaunu ho -So k address lines: ROM size = 2^K*N; Outputs = n lines; and one enable -(Picture visualize nabhaye samma dont go agadi) -Across are the word line and down are the bit lines

Fuse ROM: -Fuse are melted with high current to program 0 which were initially 1 -Good fuse = 1, melted fuse = 0

Mask ROM: -Transistor fabricate hunxa ya hudaina (or transistor rakhni tara gate conenct garni ki nai bhanni kura ho) -Gate on the word line and source in the bit line -No transistor = 1, fabricated transistor = 0 -So the thing was: decoder ko output le transistor lai on gardinxa -To read you put 5V on the word line and bit line ko mathi bata ta normal voltage aauni nai bhayo

EPROM: -Need a mechanism such that we could increase the threshold voltage by something called floating gate -For some time put high voltage in gate then floating gate sucks out electrons -Floating gate ma trap garera rakhxa electrons lai jun for long years trap huni bhako le high permanence, erase garni bela chai quartz window bata UV ray pass garni jasle garda trapped electrons release hunxan -To program you have to apply high voltage like 20 V into it (high on gate, low on drain) -To erase take it to sun, which erases whole -Another way to erase could be to high on drain but low on gate but no possible but when you increase drain voltage through bit line but that line is connected to all the transistor in the line

EEPROM: -yo chai alli confusing xa, jasma chai euta word line duita word line bata replace hunxa say w and w’; -duita transistor hunxa select and EPROM ko jasto transistor, the purpose of select transistor is to erase one bit only which was not possible in EPROM, to program put high voltage in gate voltage then select only the bit which you want to erase by using using w and w’ -To read same you put 5V into the w wala line which is of normal transistor -To program you

Flash memory: -Extension to EEPROM where another way to erase bhako wala, EPROM ma nahuna sakxa ni ta testai tarika le fabricate gareko huna saka

VOLATILES:

RAM: -Configuration nai weird xa yar, X-decoder bata aauxa ani euta word select hunxa jasle euta row lai select garni bhayo, ani euta row ma ta multiple units hunxan, ROM ma k hunthyo ta bhanda, tyo row bata sab units haru aauthey, aba chai euta Y-decoder hunxa jasle balla from that one euta unit lai select garxa ani balla euta SINGLE unit IO lines ma janxa/aauxa -(Yo X ra Y decoder wala configuration ta optimized version ho, general configuration ROM ko jastai hunxa) -Called coincident decoding to minimize size of decoder and also allow multiplexing -For example, instead of using a single 10 x 1,024 decoder, we use two 5 x 32 decoders. With the single decoder, we would need 1,024 AND gates with 10 inputs in each. The five most significant bits of the address go to input X and the five least significant bits go to input Y. Each word within the memory array is selected by the coincidence of one X line and one Y line. Thus each word in memory is selected by the coincidence between 1 of 32 rows and 1 of 32 columns, for a total of 1,024 words. -So k address lines; RAM size = 2^k*n; outputs = n lines; one enable and one r/w

DRAM: -Euta transistor ra trench capacitor -Word line lai activate garera bit line ma current rakhni bhane capacitor charge hunxa read garni bhane charge aauna sakxa so read and write through same line

SRAM: -Unit configuration is of two NOT gates feeding each other, the two select transistor activated by the word line and thei respective drain going into the input of the NOT gates and drain goes into data and data’ line, sense amplifier bata if data > data’ garera voltage lina ni sakinxa