Data mining is the process of extracting and discovering useful information and patterns in large data repositories. We live in a world where vast amounts of data are collected daily. Analyzing such data is an important need.

Not all information discovery tasks are considered to be data mining. For example, looking up individual records using a database management system or finding particular Web pages via a query to an Internet search engine are tasks related to the area of information retrieval.

Data rich information poor situation.

*Challenges?

- Scalability

- High dimensionality

- Heterogeneous and complex data:

- Data ownership and distribution

- Non-traditional analysis:

Steps?

- Data cleaning: to remove noise and inconsistent data

- Data integration: where multiple data sources may be combined

- Data selection: where data relevant to the analysis task are retrieved from the database

- Data transformation: where data are transformed and consolidated into forms appropriate for mining by performing summary of aggregation operations

- Data mining: an essential process where intelligent methods are applied to extract data patterns

- Pattern evaluation: to identify truly interesting patters representing knowledge based on interestingness measures

- Knowledge representation: where visualization and knowledge representation techniques are used to present mined knowledge to user

Steps 1 through 4 are different forms of data preprocessing, where data are prepared for mining. The data mining step may interact with the user or a knowledge base. The interesting patterns are presented to the user and may be stored as new knowledge in the knowledge base.

Datasets

Data sets are made up of data objects. A data object represents an entity - in a sales database, the objects may be customers, the objects may be customers, store items, and sales; in a medical database, the objects may be patients; in a university database, the objects may be students, professors, and courses.

Data objects are typically described by attributes. If the data objects are stored in a database, they are data tuples. That is, the rows of a database correspond to the data objects, and the columns correspond to the attributes.

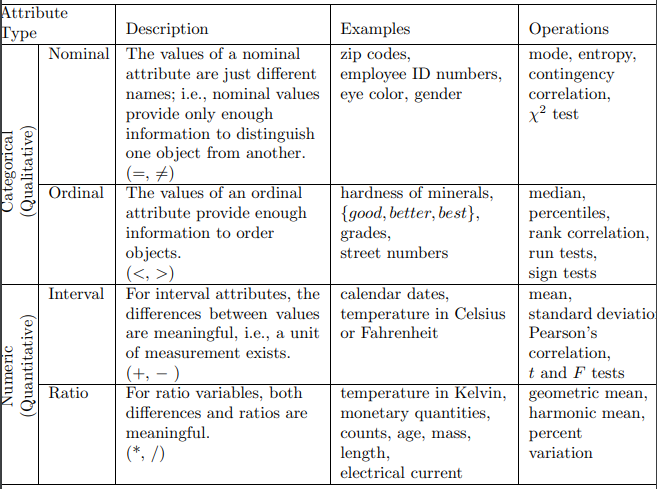

An attribute is a data field, representing a characteristic or feature of a data object. Observed values for a given attribute are known as observations. A set of attributes used to describe a given object is called an attribute vector (or feature vector).

-

Nominal attributes: The value of nominal attribute are symbols or name of things. Each value represents some kind of category, code, or state, and so nominal attributes are also referred to as categorical.

-

Binary attributes, is a nominal attribute with only two categories or states: 0 or 1, where 0 typically means that the attribute is absent, and 1 means that it is present.

-

Ordinal attributes: An ordinal attribute is an attribute with possible values that have a meaningful order or ranking among them, but the magnitude between successive values is not known.

-

Numeric attributes: A numeric attribute is quantitative; that is, it is a measurable quantity, represented in integer or real values.

Data visualization

Data visualization aims to communicate data clearly and effectively through graphical representation. More popularly, we can take advantage of visualization techniques to discover data relationships that are otherwise not easily observable by looking at the raw data.

Graphical Plots

…

Similarity and Dissimilarity

In data mining applications, we need ways to assess how alike or unalike objects are in comparison to one another.

Suppose that we have objects described by attributes also called measurements. The objects may be tuples in a relational database, and are also referred to as feature vectors. The Dissimilarity matrix stores a collection of proximities that are available for all pairs of objects. It is often represented by an n-by-n table: